What Is Claude AI and How Does It Work?

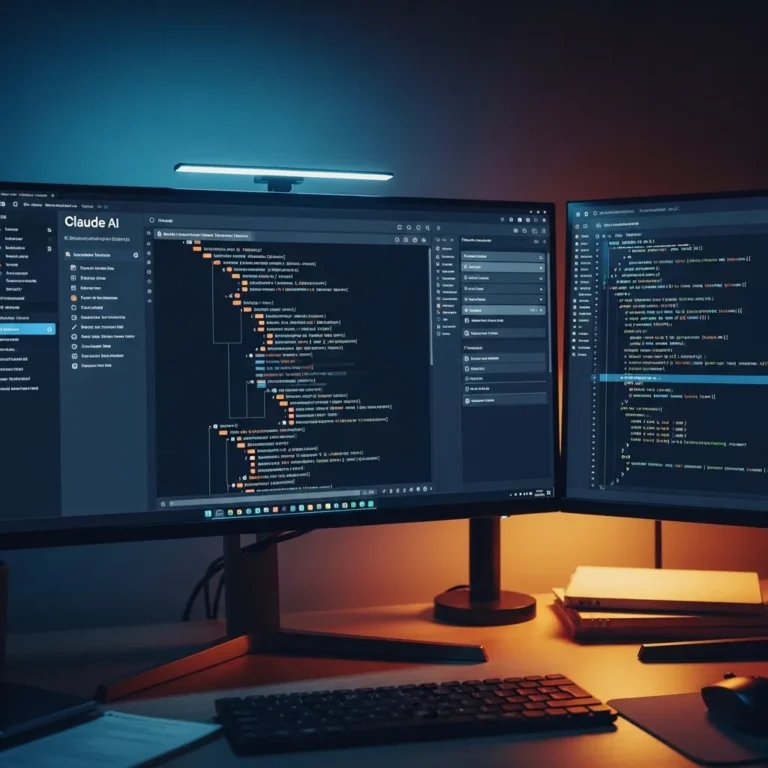

The artificial intelligence landscape has gotten crowded lately. Between ChatGPT’s meteoric rise, Google’s Bard (now Gemini), and a dozen other contenders, it’s hard to keep track of who’s building what. But there’s one AI assistant that’s been quietly making waves among developers, researchers, and professionals who take their AI interactions seriously: Claude.

I first encountered Claude about a year and a half ago when a developer friend wouldn’t stop raving about how “different” it felt compared to other chatbots. At the time, I was skeptical—another AI assistant promising to be smarter, safer, and more helpful? Sure. But after spending considerable time testing it, reading the research papers, and watching how it’s evolved, I’ve come to understand why Claude has carved out its own space in this competitive field.

Let me walk you through what Claude actually is, how it works under the hood, and why it matters in the current AI ecosystem.

What Exactly Is Claude AI?

Claude is a large language model (LLM) developed by Anthropic, an AI safety company founded in 2021 by former OpenAI researchers, including Dario and Daniela Amodei. Think of it as a conversational AI assistant—similar in concept to ChatGPT or Google’s Gemini—but built with some fundamentally different priorities baked into its design.

When you interact with Claude, you’re essentially communicating with a sophisticated neural network that’s been trained on vast amounts of text data. It can handle a wide range of tasks: writing and editing, analysis, coding, math, research assistance, creative projects, and general question-answering. What sets it apart isn’t just what it can do, but how it’s been designed to do it.

The models available as of early 2026 come in three tiers: Opus (the most capable), Sonnet (balanced performance and speed), and Haiku (fastest and most compact). This tiered approach lets users choose the right tool for their specific needs; you wouldn’t use a sledgehammer to hang a picture frame, after all.

The Anthropic Philosophy: Safety-First AI Development

To understand Claude, you need to understand Anthropic’s foundational approach. The company emerged from concerns about AI alignment—essentially, how do we build AI systems that genuinely understand and follow human intentions, especially as these systems become more powerful?

This isn’t just theoretical hand-wringing. The Amodei siblings and their team reportedly left OpenAI in part because they wanted to prioritize safety research more heavily. They’ve since published extensively on a technique called Constitutional AI (CAI), which is central to how Claude’s behavior is shaped.

Rather than relying on reinforcement learning from human feedback (RLHF) alone, Constitutional AI trains the model using a written set of principles, a “constitution,” that guides its responses. These principles address harmlessness, helpfulness, and honesty. During training, the model critiques its own drafts against these principles and revises them, and the revised outputs are used as training data. The self-critique shapes the model before release; it is not a separate review step that runs on every reply you receive.

In practice, this means Claude tends to be more thoughtful about potentially harmful requests, more willing to admit uncertainty, and generally more careful in its phrasing. Some users find this refreshing; others find it occasionally over-cautious. It’s a tradeoff Anthropic has consciously made.

How Claude Actually Works: A Technical Overview (Without the Jargon Overload)

Let’s pull back the curtain a bit. Large language models like Claude are built on transformer architecture—the same fundamental technology that powers most modern AI language systems. But that’s like saying all cars have engines; the real differences lie in the details.

The Training Process

Claude’s development happens in several stages:

Stage 1: Pre-training

The model is exposed to enormous datasets containing books, articles, websites, code repositories, and other text sources. During this phase, it learns patterns in language—grammar, facts, reasoning patterns, and even some degree of common sense. It’s essentially developing a statistical model of how language works and how concepts relate to each other.

This isn’t memorization in the traditional sense. The model develops weights and parameters (billions of them) that encode patterns. When you ask Claude a question, it’s not searching a database for the answer; it’s generating a response based on these learned patterns.
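To make “generating from learned patterns” concrete, here is a toy sketch. It is nothing like Claude’s real architecture or scale: the bigram counts stand in for billions of learned weights, and the tiny corpus and the function names `train_bigram` and `generate` are invented for illustration. It does show text being produced from statistics rather than looked up in a database.

```python
import random
from collections import Counter, defaultdict

def train_bigram(text):
    """Count which word follows which: a tiny stand-in for learned weights."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, start, length, seed=0):
    """Sample one word at a time from the learned follow-up frequencies."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(length - 1):
        followers = counts.get(output[-1])
        if not followers:
            break  # no pattern was learned for this word
        choices, weights = zip(*followers.items())
        output.append(rng.choices(choices, weights=weights)[0])
    return " ".join(output)

corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram(corpus)
print(generate(model, "the", 6))
```

Nothing in `generate` retrieves a stored sentence. Each word is sampled from frequencies observed during “training,” which is the same basic idea at a vastly smaller scale.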

Stage 2: Constitutional AI Training

Here’s where things get interesting. Rather than relying solely on human feedback, the model generates responses, critiques them against its constitutional principles, and revises them. This loop runs at scale on AI-generated feedback, which reduces how much human labeling is needed to teach the model what to avoid.

For example, if an initial response might be harmful or misleading, the model learns to recognize this through self-critique based on principles along the lines of “Choose the response that is least likely to encourage or enable harmful behavior” or “Choose the response that sounds most similar to what a thoughtful, honest person would write.”
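The critique-and-revise loop is easier to picture in code. This is a minimal sketch, not Anthropic’s implementation: `draft`, `critique`, and `revise` are hypothetical stand-ins for what would be model calls in the real method, and the two principles are shortened paraphrases.

```python
PRINCIPLES = [
    "Avoid encouraging or enabling harmful behavior.",
    "Prefer what a thoughtful, honest person would write.",
]

def draft(prompt):
    # Stand-in for the model's first attempt (a real system would call the model).
    return f"Sure! Here is everything about {prompt}, guaranteed 100% accurate."

def critique(response, principle):
    # Stand-in critic: only flags overclaiming under the honesty principle.
    if "honest" in principle and "guaranteed" in response:
        return "Overclaims certainty."
    return None

def revise(response, problem):
    # Stand-in reviser: softens the overclaim the critic found.
    return response.replace(", guaranteed 100% accurate", "; please verify key facts")

def constitutional_pass(prompt):
    """Draft, critique against each principle, and revise when a problem is found."""
    response = draft(prompt)
    for principle in PRINCIPLES:
        problem = critique(response, principle)
        if problem:
            response = revise(response, problem)
    # In the published method, (prompt, revised response) pairs become training data.
    return prompt, response

print(constitutional_pass("tax law"))
```

The point of the sketch is the shape of the loop: the revised answer, not the first draft, is what the model is later trained to produce.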

Stage 3: Reinforcement Learning from Human Feedback

Human reviewers then evaluate responses, helping fine-tune the model’s outputs. But because the constitutional approach has already done significant work, this human feedback can focus on more nuanced aspects of quality and helpfulness.
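How does a human judgment become a training signal? One formulation that is standard in the RLHF literature is a pairwise preference loss, where a reward model is penalized when it scores the response the reviewer rejected above the one they chose. Whether Claude’s training uses exactly this form is an assumption on my part; the function name and the numbers below are purely illustrative.

```python
import math

def preference_loss(reward_chosen, reward_rejected):
    """Pairwise preference loss: -log(sigmoid(reward_chosen - reward_rejected))."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) simplifies to log(1 + exp(-m))
    return math.log1p(math.exp(-margin))

# Low loss when the reward model agrees with the reviewer, high loss when it disagrees.
print(preference_loss(2.0, 0.5))
print(preference_loss(0.5, 2.0))
```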

The Context Window Breakthrough

One area where Claude has genuinely pushed boundaries is its context window—the amount of information it can actively work with in a single conversation. The Claude 4 models can handle up to 200,000 tokens, which translates to roughly 150,000 words or about 500 pages of text.

I’ve tested this personally by uploading entire research papers, lengthy codebases, and even short books. Claude can actually reference specific details from early in the document while discussing later sections, maintaining coherence across truly massive amounts of information. This isn’t just a party trick—it’s genuinely useful for professionals working with complex documents, lengthy contracts, or large datasets.
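The 200,000-token figure converts to words and pages with simple arithmetic. The sketch below assumes about 0.75 English words per token and 300 words per page; both are rules of thumb rather than exact values, and real token counts depend on the tokenizer and the text.

```python
WORDS_PER_TOKEN = 0.75  # rough rule of thumb for English text (assumption)
WORDS_PER_PAGE = 300    # typical page of prose (assumption)

def estimate_capacity(tokens):
    """Convert a token budget into approximate words and pages."""
    words = tokens * WORDS_PER_TOKEN
    pages = words / WORDS_PER_PAGE
    return round(words), round(pages)

def fits_in_context(text, limit_tokens=200_000):
    """Crude pre-check: estimate tokens from the word count, then compare to the limit."""
    estimated_tokens = len(text.split()) / WORDS_PER_TOKEN
    return estimated_tokens <= limit_tokens

print(estimate_capacity(200_000))  # (150000, 500)
```

A check like `fits_in_context` is only a first pass. For anything close to the limit, count tokens with the provider’s own tooling.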

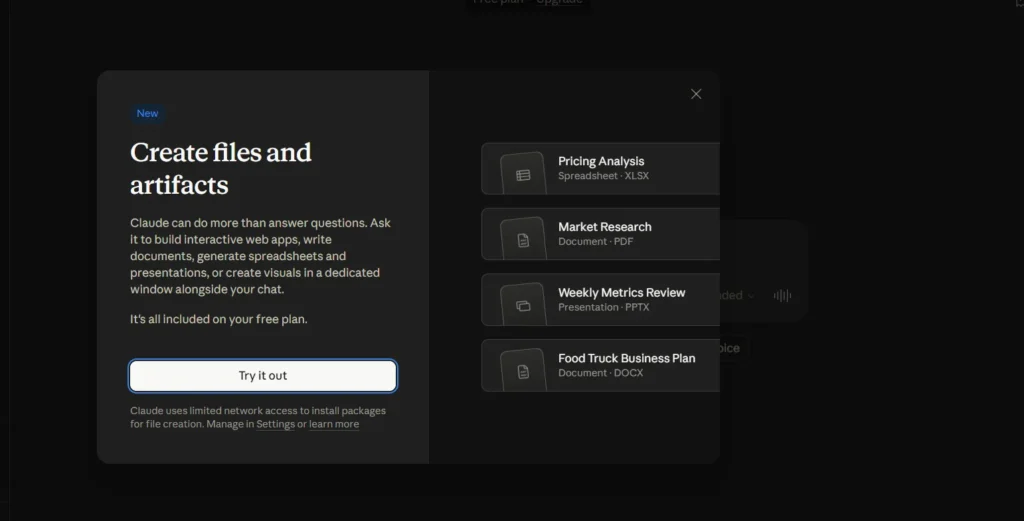

Multimodal Capabilities

The Claude 3 family introduced vision capabilities, meaning the model can now process and analyze images alongside text. You can upload a photo of a whiteboard diagram, a chart, a screenshot, or even a meme, and Claude can interpret it, extract text from it, and discuss it contextually.
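For developers, sending an image means pairing it with text inside a single message. The sketch below builds such a message using the base64 image format of Anthropic’s Messages API as I understand it. Treat the field names as something to confirm against the current documentation; the helper name `image_message` and the placeholder bytes are my own.

```python
import base64

def image_message(image_bytes, media_type, question):
    """Build one user message that pairs an image with a text question."""
    encoded = base64.standard_b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {
                "type": "image",
                "source": {"type": "base64", "media_type": media_type, "data": encoded},
            },
            {"type": "text", "text": question},
        ],
    }

# Placeholder bytes; in practice, read them from a real file such as a whiteboard photo.
message = image_message(b"\x89PNG placeholder", "image/png", "What does this diagram show?")
print(message["content"][1]["text"])
```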

This multimodal approach reflects where AI is heading generally—away from siloed text-only or image-only systems toward more integrated understanding that mirrors how humans actually process information.

What Makes Claude Different in Practice?

I’ve spent considerable time with various AI assistants, and while they’re all impressively capable, Claude does feel different in specific ways:

Communication Style

Claude tends toward longer, more thorough responses. Where ChatGPT might give you a concise answer, Claude often provides context, caveats, and nuance. This can be exactly what you want for complex topics, or occasionally more than necessary for simple questions. It’s like asking a professor versus asking a quick-thinking colleague—both valuable, different contexts.

Handling Ambiguity and Uncertainty

One thing I’ve noticed is Claude’s willingness to express uncertainty. Rather than confidently spouting nonsense (a problem that plagues AI systems, sometimes called “hallucination”), Claude more frequently acknowledges when it’s unsure or when a question has no clear answer.

For instance, if you ask about recent events beyond its training data, it’ll typically tell you directly rather than making things up. This isn’t perfect—no AI system is—but it’s noticeably better than some alternatives in my testing.

Code Generation and Technical Tasks

For developers, Claude has developed a strong reputation. The model seems particularly good at understanding context when working with code, explaining technical concepts clearly, and debugging. I’ve watched programmer friends use it for everything from writing test suites to explaining legacy code they’ve inherited.

When it was released in 2024, the Claude 3 Opus model scored competitively with GPT-4 on various coding benchmarks, and many developers report they prefer Claude’s coding style and explanations.

Creative Writing and Content

This is more subjective, but Claude’s writing often has a distinct voice—somewhat formal, thorough, and earnest. For professional content, analysis, or technical writing, this works well. For casual, punchy marketing copy or highly creative fiction, you might find it occasionally stiff.

That said, with the right prompting and direction, it’s quite adaptable. I’ve seen it produce everything from children’s stories to technical documentation to philosophical essays with varying degrees of success.

Real-World Applications: Who’s Actually Using Claude?

The practical applications span a surprisingly wide range:

Legal and Compliance Work

Law firms and corporate legal departments use Claude to analyze contracts, research case law, and draft documents. Thanks to the large context window, they can upload an entire contract for analysis rather than feeding it in pieces.

Research and Academic Work

Researchers use it to summarize papers, extract data from studies, generate literature reviews, and even help formulate hypotheses. The ability to process long documents while maintaining accuracy makes it particularly valuable here.

Software Development

Beyond individual developers, some companies integrate Claude (via Anthropic’s API) into their development workflows for code review, documentation generation, and technical support.
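A code-review integration can be as small as one HTTP request. This sketch uses only the Python standard library and follows the Messages API endpoint and headers as I understand them. The model ID and API key are placeholders, the system prompt is my own wording, and `send` is defined but never called here because it needs network access and a valid key.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"

def build_review_request(diff, model, api_key):
    """Return a urllib Request asking the model to review a diff."""
    body = {
        "model": model,
        "max_tokens": 1024,
        "system": "You are a careful code reviewer. Point out bugs and unclear code.",
        "messages": [{"role": "user", "content": f"Review this diff:\n\n{diff}"}],
    }
    headers = {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    data = json.dumps(body).encode("utf-8")
    return urllib.request.Request(API_URL, data=data, headers=headers, method="POST")

def send(request):
    """Send the request and parse the JSON reply (needs network access and a valid key)."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())

request = build_review_request("- x = 1\n+ x = 2", "MODEL_ID_PLACEHOLDER", "YOUR_API_KEY")
print(request.get_method(), request.full_url)
```

In a real workflow you would more likely use Anthropic’s official SDK and call this from a CI job, but the request shape is the same idea.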

Content Creation and Editing

Writers and editors use it for drafting, revision suggestions, fact-checking, and restructuring content. I know several professional writers who use it as a sophisticated thinking partner rather than a replacement for their own writing.

Customer Support

Companies integrate Claude into customer service systems, where its more careful, thorough responses can be preferable to quicker but potentially incomplete answers.

Education

Educators use it to generate practice problems, create lesson plans, and provide personalized tutoring support, though this remains controversial and requires thoughtful implementation.

The Limitations and Honest Drawbacks

Let’s be real: Claude isn’t perfect, and no AI system currently is. Here are the legitimate limitations I’ve encountered:

Knowledge Cutoff

Like other AI models, Claude’s training data has a cutoff date. It doesn’t know about events, research, or developments after that point unless you provide that information in the conversation. The specific cutoff varies by version, but it typically falls several months before the model’s release, and the gap only widens the longer a version stays in service.

Occasional Over-Caution

The safety measures sometimes result in Claude declining reasonable requests or adding unnecessary caveats. It’s occasionally like working with someone who’s so worried about saying the wrong thing that they overthink simple questions.

Computational Cost

The most capable version (Opus) is computationally expensive, which translates to usage limits and cost for API users. This is true across the industry, but worth noting.

Hallucination Risk

Despite improvements, Claude can still generate plausible-sounding but incorrect information, especially on obscure topics or when asked to provide specific facts it’s uncertain about. Always verify important information, especially factual claims.

Not Actually Understanding

This is philosophical but important: Claude doesn’t “understand” in the human sense. It’s pattern matching at an incredibly sophisticated level, but it lacks consciousness, genuine reasoning, or real-world experience. It’s a tool, not a thinking being.

How Claude Compares to the Competition

It’s impossible to discuss Claude without addressing the elephant in the room: how does it stack up against ChatGPT, Gemini, and others?

Versus ChatGPT/GPT-4:

These are probably the closest competitors. GPT-4 is extremely capable and has wider name recognition. Claude tends toward more verbose, careful responses while GPT-4 can be punchier. Some users prefer Claude for coding and analysis; others prefer GPT-4 for creative tasks. Honestly, they’re close enough that preference often comes down to communication style and specific use cases.

Versus Google Gemini:

Gemini has strong integration with Google’s ecosystem and excellent multimodal capabilities. Claude generally feels more focused as a conversational assistant, while Gemini benefits from Google’s vast resources and search integration.

Versus Open-Source Models:

Models like Llama, Mistral, and others offer transparency and customizability that closed models like Claude can’t match. However, they typically require more technical expertise to deploy and may not match Claude’s performance on complex tasks (though this gap is narrowing).

The reality? For most professional applications, several of these tools are “good enough” that the choice depends on specific requirements, ecosystem integration, pricing, and personal preference.

Privacy and Data Considerations

This is where things get important. Anthropic has made specific commitments about data handling:

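- Whether conversations through the consumer interface (claude.ai) are used to train models depends on the choice you make in your privacy settings, and the policy has been revised over time

- API and other commercial customers’ data is not used for training by default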

- The company emphasizes privacy in its public communications

That said, you should never share genuinely sensitive information—passwords, personal financial data, confidential business information—with any AI system unless you fully understand and accept the risks and have verified the security measures in place.
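One practical habit is to scrub obvious identifiers before text ever leaves your machine. The sketch below is deliberately simple and is not a substitute for a real data-loss-prevention tool: the two regular expressions are my own rough patterns and will miss plenty of formats.

```python
import re

# Rough illustrative patterns (assumptions); real-world data needs far more care.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "CARD": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}

def redact(text):
    """Replace each match with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

print(redact("Contact jane@example.com, card 4111 1111 1111 1111."))
```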

For enterprise users, Anthropic offers additional privacy and security guarantees, but as with any cloud service, you’re ultimately trusting the provider’s infrastructure and policies.

The Future of Claude and What’s Coming

Anthropic continues to develop Claude rapidly. Based on their research trajectory and public statements, we can expect:

- Continued increases in capability and context handling

- Better multimodal integration

- More specialized versions for specific industries

- Enhanced reasoning and mathematical capabilities

- Improved factual accuracy and reduced hallucination

The company has also been transparent about pursuing “scalable oversight”—methods for humans to effectively supervise AI systems even as they become more capable than humans at specific tasks. This research focus suggests they’re thinking long-term about AI safety, not just next quarter’s features.

Should You Use Claude? And How?

If you’re working with text-heavy professional tasks, need to analyze long documents, want thoughtful coding assistance, or simply prefer a more verbose, careful AI assistant, Claude is worth trying. The free tier at claude.ai gives you reasonable access to test it out.

For developers, the API provides flexible integration options, though you’ll want to compare pricing with alternatives based on your specific usage patterns.
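Comparing pricing comes down to multiplying your expected token volume by each provider’s per-token rates. The prices in this sketch are made-up placeholders, not Anthropic’s or anyone else’s actual rates, and `monthly_cost` is a hypothetical helper; plug in the published numbers for the models you are considering.

```python
def monthly_cost(requests_per_day, input_tokens, output_tokens, price_in, price_out, days=30):
    """Estimate monthly spend; prices are in dollars per million tokens."""
    per_request = (input_tokens * price_in + output_tokens * price_out) / 1_000_000
    return round(per_request * requests_per_day * days, 2)

# Hypothetical (input, output) prices per million tokens, for illustration only.
tiers = {"small": (1.0, 5.0), "large": (10.0, 50.0)}

for name, (price_in, price_out) in tiers.items():
    # 500 requests a day, each with 2,000 input tokens and 500 output tokens
    print(name, monthly_cost(500, 2_000, 500, price_in, price_out))
```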

A few practical tips from my experience:

- Be specific in your requests. Claude responds well to clear, detailed prompts.

- Use the context window. Don’t hesitate to upload long documents; it’s built for this.

- Iterate and refine. If the first response isn’t quite right, explain what you want differently.

- Verify important information. Use it as a starting point or thinking partner, not an oracle.

- Choose the right model tier. You don’t always need Opus; Sonnet or Haiku may be faster and cheaper for simpler tasks.
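The last tip can even be automated. Here is a hypothetical routing heuristic: the task categories, the 2,000-word threshold, and the function name `pick_tier` are all my own assumptions, and the returned strings are tier labels rather than real model IDs.

```python
COMPLEX_TASKS = {"architecture review", "legal analysis", "research synthesis"}
SIMPLE_TASKS = {"classification", "extraction", "short summary"}

def pick_tier(task, input_words):
    """Pick a model tier from the task type and input size (illustrative heuristic)."""
    if task in COMPLEX_TASKS:
        return "opus"
    if task in SIMPLE_TASKS and input_words < 2_000:
        return "haiku"
    return "sonnet"  # sensible default for everything in between

print(pick_tier("classification", 300))
print(pick_tier("legal analysis", 40_000))
```

Starting with the middle tier as the default and moving up or down based on observed quality and cost is usually easier than guessing in advance.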

Final Thoughts

Claude represents a particular philosophy in AI development—one that prioritizes safety, thoughtfulness, and capability in roughly that order. It’s not the flashiest AI assistant, and it won’t always give you the punchiest answer, but for professional work requiring nuance, depth, and reliability, it’s become a genuinely useful tool.

The broader significance goes beyond just one product. Anthropic’s work on Constitutional AI and alignment research contributes to industry-wide efforts to build AI systems that are not just powerful, but actually helpful and safe. Whether their specific approach becomes the standard or merely one contribution among many, this research matters for the field’s future.

As these systems become more integrated into our work and lives, understanding not just what they can do but how they’re built and what tradeoffs they embody becomes increasingly important. Claude isn’t just “another chatbot”—it’s a specific set of choices about how AI should be developed and deployed.

For now, it sits comfortably among the top tier of AI assistants, with particular strengths that make it the preferred choice for many professionals, even if it doesn’t grab headlines like some competitors. And honestly? That matches Anthropic’s style pretty well—serious, thoughtful, and focused on the work rather than the hype.