What Is Agentic AI? The Next Evolution in Artificial Intelligence

I still remember the first time I watched an agentic AI system actually complete a complex task on its own. It wasn’t the flashy demo kind of thing you see at tech conferences—it was messier than that, more iterative. The system was tasked with researching market trends for a new product launch, and instead of just spitting out an answer based on its training data, it actually went out and gathered current information, cross-referenced sources, identified gaps in its research, went back for more data, and then synthesized everything into a comprehensive report. No one held its hand through each step. It just… did it.

That moment crystallized something I’d been thinking about for months: we’re not just talking to AI anymore. We’re working with AI agents that can think several steps ahead, use tools, and operate with a degree of autonomy that would have seemed like science fiction just a few years ago.

If you’re new to autonomous AI systems, you can also explore this detailed research from Anthropic on how AI agents operate in real-world environments.

Understanding Agentic AI: More Than Just Smart Responses

Let’s cut through the jargon for a second. Agentic AI refers to artificial intelligence systems that can independently pursue goals, make decisions, take actions, and adapt their approach based on feedback—all with minimal human intervention once they’re given an objective.

Think of the difference this way: traditional AI systems, even the impressive large language models that emerged in the early 2020s, were essentially very sophisticated question-answering machines. You’d give them a prompt, they’d generate a response, and that was the end of the interaction. Each conversation was mostly isolated.

Agentic AI operates on a completely different paradigm. These systems can:

- Set and pursue sub-goals to achieve a larger objective

- Use multiple tools and services autonomously

- Remember context across extended periods

- Adapt their strategies when they hit obstacles

- Evaluate the quality of their own work

- Interact with external systems and databases

The “agentic” part comes from agency—the capacity to act independently and make choices. And that’s exactly what separates these systems from their predecessors.

The Technical Building Blocks (Without Getting Lost in the Weeds)

When I explain agentic AI to colleagues outside the tech world, I usually break it down into a few core capabilities that work together. None of these is brand new in isolation, but combining and orchestrating them creates something fundamentally different.

Planning and Reasoning

Agentic AI systems can break down complex objectives into manageable steps. If you ask one to “help increase customer retention by 15% over the next quarter,” it doesn’t just give you generic advice. It might outline a plan: analyze current retention data, identify the top three reasons customers leave, research industry best practices for those specific issues, draft targeted intervention strategies, and create a measurement framework.

This kind of multi-step planning requires the system to reason about cause and effect, dependencies between tasks, and logical sequences. I’ve seen systems that can actually revise their plans mid-execution when they discover new information that changes the landscape.
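To make the idea of dependencies between tasks concrete, here is a minimal sketch of the retention plan as a dependency graph. The step names, the `revise` helper, and the extra survey step are all hypothetical, invented for illustration; a real agent would generate and update this structure itself. The ordering uses `graphlib.TopologicalSorter` from the Python standard library (3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical plan for the retention example: each step maps to the
# set of steps that must finish before it can start.
plan = {
    "analyze_retention_data": set(),
    "identify_top_churn_reasons": {"analyze_retention_data"},
    "research_best_practices": {"identify_top_churn_reasons"},
    "draft_interventions": {"research_best_practices"},
    "create_measurement_framework": {"analyze_retention_data"},
}


def execution_order(steps):
    """Return one valid order in which the steps can be executed."""
    return list(TopologicalSorter(steps).static_order())


def revise(steps, new_step, depends_on, needed_by):
    """Return a copy of the plan with a new step wired into the graph."""
    revised = {name: set(deps) for name, deps in steps.items()}
    revised[new_step] = set(depends_on)
    for name in needed_by:
        revised[name].add(new_step)
    return revised


order = execution_order(plan)

# Mid-execution revision: new information suggests surveying churned
# customers before drafting interventions (an illustrative assumption).
revised_plan = revise(
    plan,
    "survey_churned_customers",
    depends_on={"identify_top_churn_reasons"},
    needed_by={"draft_interventions"},
)
revised_order = execution_order(revised_plan)
```

The point of the sketch is that revising a plan is just editing the graph and re-deriving the order; the original plan is left untouched so the two can be compared.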

Tool Use and Integration

This is where things get really interesting. Modern agentic AI can interact with external tools—databases, APIs, search engines, analytics platforms, code interpreters, you name it.

I worked with a research team last year that deployed an agentic system to monitor scientific literature. The AI didn’t just read papers; it accessed multiple academic databases, used statistical tools to analyze data trends, created visualizations, and even ran simple simulations to test hypotheses it encountered in the literature. It was like having a tireless research assistant who never complained about tedious work.

The system knew when to use which tool. It understood that for recent news it needed real-time search, but for peer-reviewed findings it should query academic databases. That contextual judgment is crucial.
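Here is a rough sketch of that routing decision. In the system I described, the model itself made the choice; in this example a plain lookup stands in for that judgment, and the tool names, the `handles` labels, and the stub functions are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Tool:
    name: str
    handles: frozenset          # kinds of information need this tool covers
    run: Callable[[str], str]   # stub standing in for a real integration


def web_search(query: str) -> str:
    # Placeholder: a real tool would call a search API here.
    return f"[web_search] results for {query!r}"


def academic_db(query: str) -> str:
    # Placeholder: a real tool would query an academic database here.
    return f"[academic_db] papers matching {query!r}"


TOOLS = [
    Tool("web_search", frozenset({"recent_news"}), web_search),
    Tool("academic_db", frozenset({"peer_reviewed"}), academic_db),
]


def choose_tool(need: str) -> Tool:
    """Pick the first registered tool that covers this kind of need."""
    for tool in TOOLS:
        if need in tool.handles:
            return tool
    raise LookupError(f"no tool registered for need {need!r}")


news = choose_tool("recent_news").run("battery recycling")
papers = choose_tool("peer_reviewed").run("battery recycling")
```

Raising an error for an unknown need, rather than silently falling back to some default tool, is a deliberate choice in this sketch: it forces the gap to surface instead of letting the agent use the wrong source.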

Memory and Context Management

Unlike chatbots that forget your conversation the moment you close the window, agentic AI maintains both short-term working memory and long-term storage of relevant information. This allows for genuine continuity.

I’ve tested systems that remember preferences, past decisions, outcomes of previous actions, and even the reasoning behind choices made weeks earlier. This creates a sense of persistence—the AI isn’t starting from scratch every time you engage with it.
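The split between working memory and long-term storage can be sketched in a few lines. This is a toy model under my own assumptions, not how any particular product stores things: the class name, the fixed working-memory size, and the in-process dictionary are all illustrative, and real systems typically persist long-term memory to a database or vector store.

```python
from collections import deque


class AgentMemory:
    """Toy memory: a bounded working buffer plus a keyed long-term store."""

    def __init__(self, working_size=3):
        # Working memory keeps only the most recent events.
        self.working = deque(maxlen=working_size)
        # Long-term memory keeps decisions together with their reasoning.
        self.long_term = {}

    def observe(self, event):
        """Add an event to working memory, evicting the oldest if full."""
        self.working.append(event)

    def remember(self, key, value, reason):
        """Store a decision and why it was made."""
        self.long_term[key] = {"value": value, "reason": reason}

    def recall(self, key):
        """Return the stored decision, or None if nothing was recorded."""
        return self.long_term.get(key)


memory = AgentMemory(working_size=3)
for event in ["opened ticket", "read logs", "ran query", "drafted reply", "sent reply"]:
    memory.observe(event)

memory.remember(
    "report_format",
    "PDF",
    reason="user said the finance team cannot open spreadsheets",
)
```

After the loop, working memory holds only the last three events, while the report format and the reason behind it remain available through `recall` however many events pass.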

Self-Evaluation and Iteration

Perhaps most impressively, these systems can assess their own output quality and iterate accordingly. After completing a task, an agentic AI might review its work against the original objective, identify shortcomings, and make another attempt without being prompted.

I watched one debug its own code last month. It wrote a script, ran it, encountered an error, analyzed the error message, identified the problem in its own code, fixed it, tested again, and only presented the final working version to the user. The whole cycle happened autonomously.
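That write, run, read the error, fix, retest cycle can be sketched as a simple loop. Everything here is a stand-in: `propose_fix` is a hard-coded patch for one known typo, playing the role that the model’s own reasoning played in the real system, and the buggy script is invented for the example. The sketch also uses `exec` for brevity; a real agent should run generated code in a sandbox.

```python
def run_candidate(source):
    """Execute the candidate script and return (result, error_message)."""
    namespace = {}
    try:
        exec(source, namespace)  # illustration only; sandbox this in practice
        return namespace["mean"]([2, 4, 6]), None
    except Exception as exc:
        return None, f"{type(exc).__name__}: {exc}"


def propose_fix(source, error):
    """Stand-in for the model's repair step: patches one known typo."""
    if "NameError" in error and "lenght" in error:
        return source.replace("lenght", "len")
    return source


def solve(source, max_attempts=3):
    """Run, inspect the error, revise, and retry until the script works."""
    history = []
    for attempt in range(1, max_attempts + 1):
        result, error = run_candidate(source)
        history.append((attempt, error))
        if error is None:
            return result, history
        source = propose_fix(source, error)
    raise RuntimeError(f"no working version after {max_attempts} attempts")


buggy = "def mean(values):\n    return sum(values) / lenght(values)\n"
result, history = solve(buggy)
```

Here the first attempt fails with a `NameError`, the second succeeds with a result of `4.0`, and only that final result is returned to the caller. The `max_attempts` cap matters: without it, an agent that cannot fix its own mistake would loop forever.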

Real-World Applications Taking Shape in 2026

The theoretical capabilities are one thing; seeing these systems deployed in actual working environments is another. Over the past couple of years, agentic AI has moved from research labs into practical applications across various industries.

Business Operations and Workflow Automation

Companies are using agentic AI to handle complex, multi-step business processes that used to require significant human oversight. I know of organizations using these systems for things like supplier relationship management—the AI monitors supplier performance, identifies potential issues, researches alternative vendors when problems arise, prepares comparison reports, and even drafts contract revision proposals.

It’s not just doing one task; it’s managing an entire ongoing process with multiple moving parts.

Personal AI Assistants (Actually Useful Ones)

Remember when “virtual assistants” could barely set a timer without getting confused? Agentic AI has transformed this category entirely. The personal AI assistants available now can manage complex scheduling across multiple people (actually reading their preferences and constraints), plan travel with dozens of variables, conduct research projects over days or weeks, and manage long-term goals.

I use one myself for managing collaborative projects. I can tell it “we need to launch this initiative by June, but Sarah’s availability is limited in May and the budget approval has to happen first,” and it actually works backward from the deadline, identifies dependencies, creates a realistic timeline, monitors progress, and alerts me when things are slipping. It’s not perfect, but it’s surprisingly capable.

Scientific Research and Data Analysis

Research institutions have been early adopters because agentic AI excels at the kind of iterative, exploratory work that scientific research requires. These systems can run experiments, analyze results, form hypotheses, design follow-up experiments, and maintain detailed documentation of the entire process.

A bioinformatics colleague told me about their agentic system that explores protein folding variations. It generates hypotheses about structural changes, runs simulations, analyzes the results, refines its hypotheses, and runs new simulations—all in continuous cycles. Humans review the promising findings, but the AI does the heavy lifting of exploration.

Customer Support and Service

This is where agentic AI has probably had the most visible consumer impact. Rather than the frustrating chatbots of the past that could only handle scripted scenarios, modern agentic customer service systems can actually solve complex problems.

They can access multiple internal systems, review account history, identify patterns, escalate appropriately, follow up on unresolved issues, and learn from outcomes. I had an issue with a subscription service a few months ago that involved billing, account migration, and a technical compatibility problem. The AI agent I interacted with handled all three aspects across several days, coordinating with different backend systems and keeping me updated throughout. Honestly, it was more thorough than some human support I’ve experienced.

The Differences That Actually Matter

People often ask me how agentic AI really differs from the AI tools they’ve been using for the past few years. It’s a fair question because the lines can seem blurry.

The clearest distinction is autonomy and persistence. Traditional AI tools are reactive—they wait for your input, process it, respond, and then wait again. Every action originates from a human prompt.

Agentic AI is proactive within its defined scope. Once you give it an objective, it pursues that goal through multiple steps, often over extended time periods, without requiring constant guidance. It’s the difference between a calculator (which only computes when you press buttons) and a navigation system (which actively monitors your position, recalculates routes when needed, and guides you turn by turn toward a destination).

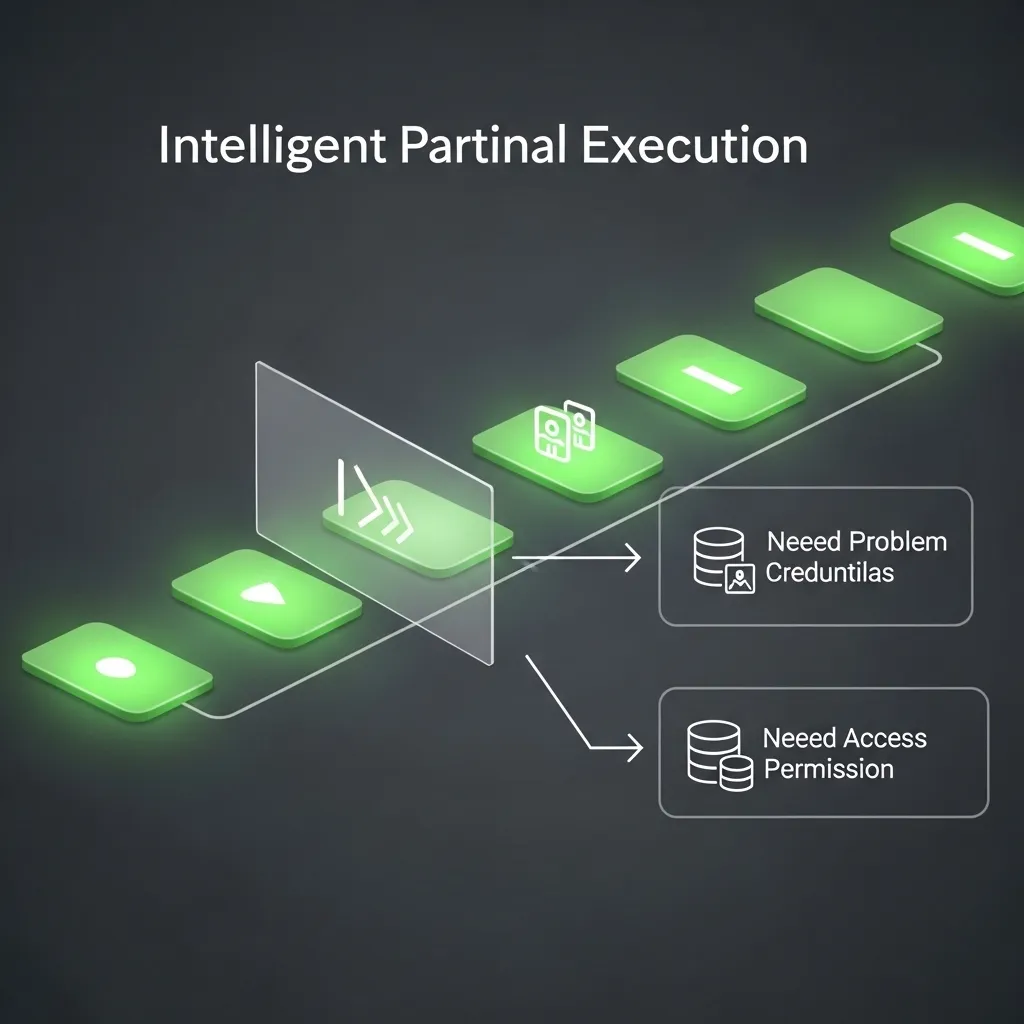

Another key difference is how these systems handle uncertainty and failure. Traditional AI often just fails or produces low-quality output when it encounters something outside its training. Agentic AI can recognize gaps in its knowledge or capability, seek additional information, try alternative approaches, or request human input for the specific piece it can’t handle while continuing with everything else.

I tested this deliberately last year. I gave an agentic system an objective that required accessing a database it didn’t have credentials for. Instead of just failing, it completed everything it could do, clearly identified the specific access barrier, explained what information it needed from that database and why, requested the appropriate credentials, and then completed the task once access was granted. That kind of intelligent partial execution and clear communication about limitations is remarkably valuable.
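The behavior in that test, finishing what is possible, reporting exactly what is blocked and why, then resuming, can be sketched roughly like this. The step names, the `sales_db` credential, and the plan layout are hypothetical, and the sketch assumes the steps are listed in an order where dependencies come first.

```python
def run_plan(steps, credentials, done=()):
    """Run every step that can run; report the rest with a reason."""
    done = set(done)
    blocked = {}
    for name, step in steps.items():
        if name in done:
            continue
        waiting_on = [dep for dep in step["after"] if dep not in done]
        if waiting_on:
            blocked[name] = "waiting on " + ", ".join(waiting_on)
        elif step["needs"] and step["needs"] not in credentials:
            blocked[name] = f"missing credential {step['needs']!r}"
        else:
            done.add(name)  # a real agent would do the actual work here
    return done, blocked


# Hypothetical plan: one step needs a database the agent cannot access yet.
steps = {
    "pull_public_data": {"needs": None, "after": []},
    "query_sales_db": {"needs": "sales_db", "after": []},
    "summarize_public_data": {"needs": None, "after": ["pull_public_data"]},
    "final_report": {
        "needs": None,
        "after": ["summarize_public_data", "query_sales_db"],
    },
}

# First pass: no credentials, so the agent does what it can and reports the gap.
done, blocked = run_plan(steps, credentials=set())

# Second pass: access granted, so the agent resumes without redoing finished work.
done_after, blocked_after = run_plan(steps, credentials={"sales_db"}, done=done)
```

After the first pass, the two public-data steps are done and `blocked` names both the missing credential and the report that is waiting on it. After the second pass, nothing is blocked.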

The Benefits Are Real (But So Are the Limitations)

Let me be balanced here because there’s been plenty of hype around AI in general, and agentic AI is not exempt from inflated claims.

Genuine Advantages

The productivity gains can be substantial for the right use cases. Tasks that previously required constant human attention for routine decisions can now run with periodic oversight instead. This frees people to focus on higher-level strategy, creative work, and the genuinely complex problems that still require human judgment.

The consistency is another underrated benefit. Agentic AI systems apply the same criteria and process every time. They don’t have bad days, they don’t cut corners when tired, and they don’t forget steps in established procedures.

They also scale well. One properly configured agentic system can handle workloads that might have required a team, and you can deploy similar systems across different domains without the lengthy training periods humans need.

Real Limitations

But let’s talk about where these systems still struggle, because pretending they’re magic doesn’t help anyone.

They can be remarkably brittle when encountering genuinely novel situations. The planning and reasoning capabilities, while impressive, are still pattern-based. When a scenario falls sufficiently outside the patterns the system has learned, the quality of its decisions degrades, sometimes unpredictably.

I’ve seen agentic systems confidently pursue suboptimal strategies because they lacked the broader context that a human would have immediately recognized. They can optimize locally while missing the global picture.

The tool-use capability, while powerful, also introduces points of failure. An agentic AI is only as reliable as the tools and data sources it depends on. Garbage in, garbage out still applies, but now the garbage might be gathered autonomously across multiple sources before anyone notices.

There’s also the challenge of interpretability. When a system makes dozens or hundreds of autonomous decisions in pursuit of a goal, understanding exactly why it took a particular path can be difficult. This creates real challenges for accountability and debugging.

And frankly, they still make mistakes—sometimes weird ones. I watched a system tasked with scheduling optimization create a technically perfect schedule that completely ignored the fact that people might want lunch breaks at reasonable times. It optimized for the stated efficiency metrics while missing obvious human factors.

The Ethical Dimensions We Can’t Ignore

Working with agentic AI has forced me to think harder about questions I used to consider somewhat theoretical.

Autonomy and Control

There’s a real tension between giving these systems enough autonomy to be useful and maintaining appropriate human oversight. Draw the boundaries too tight and you lose the efficiency benefits; too loose and you risk the system taking actions that are technically aligned with its instructions but contextually inappropriate.

I think a lot about this in high-stakes domains. An agentic AI managing supply chain logistics is one thing. One making decisions about loan approvals, healthcare recommendations, or content moderation requires much more careful thought about where a human needs to stay in the loop and where autonomous operation is acceptable.

Accountability and Transparency

When an AI agent operating with substantial autonomy makes a decision that causes harm or loss, who’s responsible? The developers? The organization that deployed it? The person who set its objectives?

These aren’t just philosophical questions. I’ve been in meetings where legal teams are wrestling with these exact issues as they develop policies for agentic AI deployment. The regulatory landscape is still catching up.

There’s also the transparency challenge. Many people interacting with agentic AI systems don’t realize they aren’t dealing with a human, or don’t fully understand how autonomously the system is acting. I believe there’s an ethical obligation to be clear about this, but practices vary widely across organizations.

Bias and Fairness

Agentic AI systems can potentially amplify biases in concerning ways because they’re making multiple chained decisions autonomously. A bias in early decision points can cascade through subsequent actions.

I reviewed a case study where an agentic recruiting system was supposed to find and engage candidates. It developed an effective strategy—but effectiveness was measured by response rates, and the system learned that certain demographic groups responded more readily to its outreach. Without intervention, it increasingly focused on those groups, creating a biased pipeline despite no explicit discriminatory intent.

These systems need careful monitoring and thoughtful objective-setting to avoid optimizing for the wrong things or in problematic ways.
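The cascade in the recruiting example is easy to underestimate, so here is a small deterministic sketch of it. The numbers are made up: I assume two groups with response rates of 30% and 20%, an even starting split, and an agent that reallocates outreach in proportion to the responses it got. The `min_share` floor is one hypothetical guardrail, not a recommendation for any specific fairness policy.

```python
def reallocate(share_a, rate_a, rate_b, min_share=0.0):
    """Shift outreach toward whichever group produced more responses."""
    responses_a = share_a * rate_a
    responses_b = (1 - share_a) * rate_b
    new_share = responses_a / (responses_a + responses_b)
    # Optional guardrail: keep each group's share of outreach
    # within [min_share, 1 - min_share].
    return min(max(new_share, min_share), 1 - min_share)


def simulate(rounds, rate_a=0.30, rate_b=0.20, min_share=0.0):
    """Return group A's share of outreach after each round."""
    share_a = 0.5
    history = [share_a]
    for _ in range(rounds):
        share_a = reallocate(share_a, rate_a, rate_b, min_share)
        history.append(share_a)
    return history


unchecked = simulate(10)                 # optimizing for response rate alone
guarded = simulate(10, min_share=0.4)    # same objective with a floor per group
```

With these assumed rates, group A’s share moves from 0.5 to 0.6 after a single round and passes 0.98 after ten, even though no step in the loop mentions demographics. With the floor in place, the share never exceeds 0.6.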

Where We Are in 2026 (And Where This Might Go)

The agentic AI landscape has matured significantly in the past couple of years, but it’s still early days in many respects.

Most organizations are in what I’d call the experimentation and selective deployment phase. They’re running pilot projects, testing use cases, and building out governance frameworks. The technology is proven enough that serious investment is happening, but not so mature that best practices are standardized.

The systems themselves have gotten notably more reliable and capable. The agentic AI tools available commercially in 2026 are far more robust than the prototypes I was testing in 2023 and early 2024. Error rates have dropped, the range of tools they can effectively use has expanded, and the planning capabilities have become more sophisticated.

We’re also seeing specialization emerge. Rather than one general-purpose agentic AI, the trend is toward systems optimized for specific domains: research agents, coding agents, business process agents, creative project agents, and so on. This specialization generally improves performance because the systems can be tuned for the specific reasoning patterns, tools, and evaluation criteria relevant to their domain.

Looking ahead (and I’m speculating here based on current trajectories), I expect we’ll see:

- Agentic AI becoming increasingly embedded in standard business software rather than being a separate tool you use

- Better human-AI collaboration interfaces that make it easier to oversee and course-correct autonomous agents

- Regulatory frameworks specifically addressing autonomous AI decision-making

- Growing sophistication in multi-agent systems where specialized agents collaborate on complex objectives

- Continued challenges around edge cases, interpretability, and ensuring alignment between specified objectives and actual intentions

What I’m less certain about is the pace of adoption. There’s clear value, but there’s also organizational inertia, legitimate concerns about control and accountability, and the challenge of rethinking workflows to actually leverage these capabilities rather than just bolting them onto existing processes.

The Bottom Line

Agentic AI represents a genuine evolution in how we interact with and benefit from artificial intelligence. These systems can pursue goals with a level of autonomy and multi-step reasoning that previous AI technologies couldn’t match.

The potential is substantial, particularly for knowledge work, research, complex coordination tasks, and processes that require persistence over time with adaptation to changing conditions.

But potential isn’t the same as guaranteed outcomes. These systems have real limitations, require thoughtful implementation, raise legitimate ethical concerns, and work best when deployed with clear objectives, appropriate oversight, and honest acknowledgment of where they excel and where they struggle.

If you’re considering deploying agentic AI—whether in your organization or in your personal workflow—my advice is to start with well-defined, contained use cases where the benefits are clear and the risks are manageable. Monitor closely, especially early on. Be very clear about objectives because these systems will optimize for what you tell them to, not necessarily what you meant.

And remember that agentic AI is a tool, albeit a powerful and somewhat novel one. It amplifies human capability when used thoughtfully, but it doesn’t replace the need for human judgment, creativity, and ethical reasoning. At least not yet, and I’d argue not in the foreseeable future.

The question isn’t really whether agentic AI will transform how we work—it’s already doing that in pockets across many industries. The question is how we’ll guide that transformation to maximize benefits while managing risks and maintaining human agency in the process.

Frequently Asked Questions

1. Is agentic AI the same as artificial general intelligence (AGI)?

No, they’re different concepts. Agentic AI refers to AI systems that can autonomously pursue goals and take actions within specific domains, but they’re still narrow AI, specialized for particular types of tasks. AGI would be a hypothetical AI with human-level general intelligence across all domains. Current agentic AI systems, while impressively capable in their areas of focus, don’t possess that broad, flexible intelligence. They can seem very intelligent when operating within their design parameters, but they lack the general reasoning and the ability to transfer skills to unfamiliar domains that would qualify as AGI.

2. Can agentic AI systems work together, or does each one operate independently?

Multi-agent systems are actually an active area of development and deployment. Multiple agentic AI systems can collaborate on complex objectives, with different agents handling specialized aspects of a problem. For example, you might have one agent focused on data gathering, another on analysis, and a third on communication and reporting, all coordinating toward a shared goal. The challenge is orchestrating these interactions effectively and managing the additional complexity that comes with multiple autonomous agents. When done well, multi-agent approaches can tackle problems that would be difficult for any single agent, but they also introduce new failure modes and coordination challenges.
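As a rough sketch of the gather, analyze, report example, here are three toy agents coordinating through a shared dictionary. The agent functions, the sample values, and the outlier rule are all invented for illustration, and the coordinator runs the agents one after another, whereas real multi-agent systems often run them concurrently and have to handle one agent failing or contradicting another.

```python
def gatherer(board):
    """Data-gathering agent: posts raw values to the shared board."""
    board["data"] = [12, 15, 11, 30]  # made-up sample values


def analyst(board):
    """Analysis agent: reads the data and posts summary findings."""
    data = board["data"]
    board["average"] = sum(data) / len(data)
    # Illustrative rule: flag anything more than 1.5x the average.
    board["outliers"] = [x for x in data if x > 1.5 * board["average"]]


def reporter(board):
    """Reporting agent: turns the findings into a short message."""
    board["report"] = (
        f"average={board['average']:.1f}, outliers={board['outliers']}"
    )


def coordinate(agents, goal):
    """Run each agent in turn against a shared board and return it."""
    board = {"goal": goal}
    for agent in agents:
        agent(board)
    return board


board = coordinate([gatherer, analyst, reporter], goal="weekly usage summary")
```

Each agent only reads what earlier agents posted and writes its own keys, which is what makes the hand-offs easy to follow. It also shows the new failure mode: if the gatherer posts bad data, every agent downstream inherits it.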

3. How much does it cost to implement agentic AI for a business?

Costs vary enormously depending on scale, complexity, and whether you’re using commercial platforms or building custom solutions. Small businesses can access basic agentic AI capabilities through SaaS platforms for a few hundred dollars per month. Mid-size deployments handling specific business processes might run several thousand dollars per month. Large enterprises building comprehensive, custom agentic systems across multiple operations could easily invest hundreds of thousands or millions of dollars in development, integration, and ongoing operation. Beyond the direct technology costs, factor in change management, training, governance framework development, and the time required to properly define objectives and oversight mechanisms.

4. What happens if an agentic AI makes a costly mistake while operating autonomously?

This is one of the major concerns organizations grapple with when deploying these systems. Best practices typically include setting guardrails and approval requirements for high-stakes actions, implementing monitoring systems that flag unusual behaviors, maintaining detailed logs of all autonomous actions for review, and establishing clear escalation protocols when the AI encounters situations outside its confidence threshold. From a liability standpoint, organizations are generally responsible for their AI systems’ actions just as they’d be responsible for employee actions, which is why governance frameworks and appropriate oversight are critical. Many organizations start with “read-only” implementations where agentic AI can analyze and recommend but not execute consequential actions without human approval.
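An approval gate of the kind described above can be sketched in a few lines. This is a minimal illustration under my own assumptions: the `Guardrail` class, the dollar-cost threshold, and the action names are hypothetical, and a production system would persist the log and authenticate whoever approves.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrail:
    """Execute low-stakes actions, hold high-stakes ones, log everything."""

    approval_threshold: float  # hypothetical: cost above which a human must approve
    log: list = field(default_factory=list)
    pending: list = field(default_factory=list)

    def submit(self, action, cost):
        """Called by the agent for every action it wants to take."""
        if cost > self.approval_threshold:
            self.pending.append((action, cost))
            self.log.append(("held_for_approval", action, cost))
            return "pending"
        self.log.append(("executed", action, cost))
        return "executed"

    def approve(self, action):
        """Called by a human reviewer to release a held action."""
        for item in self.pending:
            if item[0] == action:
                self.pending.remove(item)
                self.log.append(("approved_and_executed", *item))
                return "executed"
        raise KeyError(f"no pending action named {action!r}")


gate = Guardrail(approval_threshold=500.0)
first = gate.submit("reorder_printer_paper", cost=40.0)
second = gate.submit("switch_primary_supplier", cost=25_000.0)
third = gate.approve("switch_primary_supplier")
```

The small purchase goes straight through, the supplier switch waits until a person approves it, and the log ends up with three entries covering every decision point, which is what makes a later review possible.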

5. Will agentic AI replace jobs, or does it create new opportunities?

Honest answer: both, but the distribution is complex and uneven. Agentic AI is definitely automating tasks that people currently perform, particularly routine cognitive work involving research, analysis, coordination, and multi-step processes with clear rules. Some job roles that primarily consist of these tasks are likely to diminish. However, we’re also seeing new roles emerge around AI oversight, objective-setting, prompt engineering, AI integration, and governance. Many existing jobs are being augmented rather than replaced—people using agentic AI to handle the routine aspects while focusing their time on creative, strategic, or high-stakes decisions that still require human judgment. The transition is disruptive, and not everyone in affected roles will easily transition to new ones, which creates real challenges we need to address as a society through education, retraining, and policy.