AI Automation Trends 2026: What’s Actually Happening Beyond the Hype

I’ve been watching AI automation evolve since the ChatGPT moment in late 2022, and I can tell you the landscape in 2026 looks nothing like what most people predicted three years ago. Some technologies we thought would dominate have fizzled. Others that seemed like minor features have become fundamental to how businesses operate.

I’m writing this in March 2026, working with about a dozen clients across industries, testing new tools almost weekly, and watching patterns emerge that feel genuinely different from the previous waves of automation we’ve seen over the past decade.

This isn’t going to be a breathless “AI will change everything!” piece. We’re past that phase. AI automation has changed quite a bit, but in specific, practical ways—not the sci-fi transformations tech evangelists promised. Let me walk you through what’s actually happening right now and where this seems to be heading.

The Shift From Task Automation to Process Orchestration

The biggest change I’m seeing in 2026 isn’t faster automation or smarter algorithms—it’s scope. We’ve moved beyond automating individual tasks to automating entire business processes across multiple systems.

Let me give you a concrete example from a client in professional services. Two years ago, they used automation to handle specific tasks: schedule appointments, send follow-up emails, generate invoices. Each automation was isolated—do this one thing when triggered.

Now? They’ve got what their vendor calls an “orchestration layer” (I’m less fond of the terminology than the functionality). When a new client signs a contract, AI doesn’t just execute a series of tasks. It manages an entire onboarding process that adapts based on context.

The system reads the contract to understand scope and deliverables, then creates a customized project in their management software. It assigns team members based on skills and availability, weighing past performance on similar projects rather than applying fixed rules alone. It generates a communication plan with specific touchpoints, sets up financial tracking, and schedules the kickoff meeting at a time it judges optimal based on everyone’s calendar patterns and work rhythms.

What’s different from previous automation? It doesn’t follow a rigid if-this-then-that flowchart; it makes contextual decisions at each step. When conflicts arise, such as two team members who are both needed on different projects, it doesn’t just flag an error. It weighs priority and timeline flexibility, then either suggests a solution or escalates to a human with the relevant context.
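To make the difference concrete, here is a toy Python sketch of a single decision step: resolve a staffing conflict when the evidence is clear, escalate with context when it isn’t. The `Project` fields, the priority scale, and the `min_priority_gap` rule are all hypothetical; the vendor’s system presumably feeds far richer signals (calendars, past performance) into a model rather than a two-line rule.

```python
from dataclasses import dataclass


@dataclass
class Project:
    name: str
    priority: int    # hypothetical scale: 1 (low) to 5 (critical)
    slack_days: int  # how many days this project's start could slip


@dataclass
class Resolution:
    action: str      # "assign" or "escalate"
    detail: str


def resolve_conflict(person: str, a: Project, b: Project,
                     min_priority_gap: int = 2) -> Resolution:
    """Give a contested team member to one project, or escalate with context."""
    high, low = (a, b) if a.priority >= b.priority else (b, a)
    # Clear case: one project clearly outranks the other AND the lower-priority
    # project has room to slip, so the system can act on its own.
    if high.priority - low.priority >= min_priority_gap and low.slack_days > 0:
        return Resolution(
            "assign",
            f"{person} -> {high.name}; shift {low.name} by up to {low.slack_days} days",
        )
    # Ambiguous case: hand the decision to a human, with the facts attached.
    return Resolution(
        "escalate",
        f"{person} is needed by {a.name} (priority {a.priority}, slack {a.slack_days}d) "
        f"and {b.name} (priority {b.priority}, slack {b.slack_days}d)",
    )


print(resolve_conflict("Dana", Project("Audit", 5, 0), Project("Website", 2, 10)).action)  # assign
print(resolve_conflict("Dana", Project("Audit", 3, 0), Project("Website", 3, 0)).action)   # escalate
```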

This kind of cross-system, decision-making automation has moved from experimental to mainstream in the past year. I’d estimate 60-70% of the mid-sized B2B companies I work with have implemented at least one process orchestration like this, compared to maybe 10% in early 2025.

Autonomous Agents Are Here (Sort Of)

You’ve probably heard about “AI agents” that can complete complex goals with minimal human intervention. The reality in 2026 is more modest than the hype but more useful than the skeptics expected.

I’ve been testing several agent platforms: AutoGPT has evolved significantly, new entrants such as Lindy and Orby have emerged, and established players like UiPath and Automation Anywhere have added agentic capabilities to their RPA platforms.

Here’s what actually works: Agents handling bounded domains with clear success criteria. I deployed an agent for a client’s customer research function. Its job: monitor specific industry sources, identify relevant trends, compile weekly briefings, and flag items requiring immediate attention.

Does it work? Mostly, yes. The agent runs continuously, processes hundreds of sources weekly, and produces briefings that are genuinely useful about 75% of the time. The other 25% either need human editing or are far enough off-target that we discard them.
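What makes this kind of agent workable is that its success criteria can be written down and checked. The sketch below is a hypothetical reduction of the briefing job to that skeleton: simple keyword matching stands in for the model’s relevance judgment, and the topic and urgent-term lists are invented for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Item:
    source: str
    title: str
    text: str


@dataclass
class Briefing:
    relevant: list[Item] = field(default_factory=list)
    urgent: list[Item] = field(default_factory=list)


def compile_briefing(items: list[Item], topics: list[str],
                     urgent_terms: list[str], min_hits: int = 1) -> Briefing:
    """Keep items that mention enough watched topics; flag urgent ones."""
    briefing = Briefing()
    for item in items:
        body = f"{item.title} {item.text}".lower()
        # Stand-in for a model's relevance judgment: count topic mentions.
        hits = sum(1 for topic in topics if topic.lower() in body)
        if hits < min_hits:
            continue
        briefing.relevant.append(item)
        # Explicit, checkable criterion for "needs immediate attention".
        if any(term.lower() in body for term in urgent_terms):
            briefing.urgent.append(item)
    return briefing


items = [
    Item("trade-journal", "New tariff on steel imports", "Effective immediately for all suppliers."),
    Item("blog", "Ten productivity tips", "Nothing about our market."),
    Item("regulator", "Draft guidance on steel labeling", "Comment period opens next quarter."),
]
briefing = compile_briefing(items, topics=["steel", "tariff"], urgent_terms=["effective immediately"])
print([i.title for i in briefing.relevant])  # ['New tariff on steel imports', 'Draft guidance on steel labeling']
print([i.title for i in briefing.urgent])    # ['New tariff on steel imports']
```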

Where agents still struggle: Ambiguous goals, novel situations, and anything requiring genuine creativity or strategic judgment. I tested an agent that was supposed to “improve our social media presence.” It posted content, responded to comments, and ran engagement campaigns. Technically functional, but strategically directionless. Engagement went up, but it was the wrong kind of engagement—quantity without quality.

The trend I’m seeing: Companies are deploying agents for specific, measurable functions (research monitoring, data entry and validation, routine customer inquiries, report generation) while maintaining human oversight for strategy and quality control.

One interesting development in late 2025 was the emergence of “agent managers”—humans whose primary job is supervising multiple AI agents rather than doing the work directly. I know three people whose job titles literally changed from “Analyst” or “Coordinator” to “Agent Manager” or “AI Supervisor.” That’s a real shift in how work is structured.

Personalized Automation Is Getting Weirdly Good

This might be the trend I find most personally impactful and slightly unnerving. AI automation tools are increasingly adapting to individual work styles rather than forcing everyone into the same workflows.

I use Motion for scheduling and task management. When I started using it two years ago, it was rules-based—you set preferences, it followed them. Now? It’s learned patterns I didn’t explicitly program.

It knows I’m more effective on deep work between 9 AM and noon, so it protects that time fiercely, scheduling meetings in the afternoon when possible. It’s learned that I tend to underestimate how long writing tasks take but overestimate administrative work, so it adjusts time blocks accordingly. When I reschedule tasks repeatedly, it recognizes I’m avoiding something and will either reschedule it to a time when I historically have better follow-through or flag it for me to reconsider whether the task is actually important.

I didn’t teach it any of that explicitly. It inferred patterns from months of behavior.
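Motion doesn’t publish how it does this, so the following is only a guess at the simplest mechanism that could produce the writing-versus-admin adjustment: learn, per task category, the median ratio of actual to estimated time, and scale new estimates by it. The function names and sample history are hypothetical.

```python
from collections import defaultdict
from statistics import median


def learn_ratios(history):
    """history: (category, estimated_minutes, actual_minutes) tuples from past tasks."""
    ratios = defaultdict(list)
    for category, estimated, actual in history:
        if estimated > 0:
            ratios[category].append(actual / estimated)
    # Median is less sensitive than the mean to one task that ran wildly long.
    return {category: median(values) for category, values in ratios.items()}


def adjusted_block(category, estimate_minutes, ratios):
    """Scale a new estimate by the learned ratio; unknown categories stay unchanged."""
    return round(estimate_minutes * ratios.get(category, 1.0))


history = [
    ("writing", 60, 90), ("writing", 30, 45), ("writing", 60, 120),
    ("admin", 60, 30), ("admin", 40, 30),
]
ratios = learn_ratios(history)
print(adjusted_block("writing", 60, ratios))  # 90  (writing runs ~1.5x over estimate)
print(adjusted_block("admin", 80, ratios))    # 50  (admin finishes early)
```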

This personalization is appearing across automation tools:

- Email tools that learn which messages you respond to quickly vs. which you defer, and triage accordingly

- Writing assistants that adapt to your style quirks and preferences without explicit style guides

- Research tools that surface information types you actually use rather than generic “relevant” results

- Meeting schedulers that understand your preferences better than you articulate them

A marketing director I work with uses an AI social media tool that’s learned her brand voice well enough that she can’t consistently distinguish her own posts from AI-suggested content in blind tests. That’s both impressive and a little strange.

The privacy implications are real—these tools are analyzing massive amounts of behavioral data. Most people don’t fully grasp how much their automation tools know about their work patterns, communication style, and decision-making tendencies.

Voice and Conversational Interfaces Are Finally Not Terrible

I’ve been skeptical about voice interfaces for years. Alexa and Siri convinced me that voice AI was perpetually “promising” but never quite useful for serious work.

That’s changing. The quality jump in conversational AI over the past 18 months has been substantial.

I’m now using voice interfaces for automation tasks I would’ve dismissed as impractical two years ago. During client meetings, I verbally tell my AI assistant to create follow-up tasks, schedule next steps, and add details to my CRM. It understands context well enough that I don’t have to speak in robotic commands.

Instead of: “Add task ‘send proposal’ due March 15th category client work priority high”

I say: “Remind me to get the proposal to them by the middle of next week, this is pretty urgent”

And it correctly creates the task with appropriate due date, priority, and context. It’s fast enough that it doesn’t disrupt conversation flow.

I watched a colleague use voice to manipulate spreadsheets while presenting—”highlight the Q3 revenue column, now show me year-over-year growth, filter for products over $50K revenue”—with maybe 90% accuracy. That would’ve been science fiction three years ago.

The driver seems to be newer language models with better contextual understanding combined with multimodal inputs (they can see what’s on your screen, not just hear your words).

Adoption is still early—I’d guess 15-20% of knowledge workers are using voice for substantive automation tasks, not just setting timers and playing music. But it’s growing fast. The quality crossed some threshold where it’s reliable enough for real work.

The limitation: It still struggles in noisy environments and with heavy accents or non-standard speech patterns. A colleague with a pronounced accent finds voice interfaces frustrating. Accessibility improvements are uneven.

Small, Specialized Models Are Outperforming General Purpose AI

This trend runs counter to the “bigger is better” narrative that dominated AI development for years.

Throughout 2025 and into 2026, I’ve watched smaller, domain-specific AI models outperform massive general-purpose models for particular use cases.

Example: A healthcare client needed AI to process medical intake forms and flag potential issues. We initially tried fine-tuning GPT-4 and Claude. Results were okay but not great—too many false flags, occasional critical misses.

We switched to a specialized medical AI model trained specifically on healthcare documentation (about one-tenth the size of the general models). Accuracy improved by roughly 30%, and the model ran faster and cheaper.

I’m seeing this pattern across industries. Legal AI for contract review, financial AI for fraud detection, manufacturing AI for quality control—specialized models trained on domain-specific data are often beating general-purpose AI that technically has more parameters and broader training.

The practical implication: Companies are increasingly building or buying vertical AI solutions rather than trying to adapt horizontal tools. The AI automation landscape is fragmenting from “ChatGPT for everything” to “the right specialized tool for each function.”

This creates new challenges—integration complexity, multiple vendor relationships, managing different interfaces. But the accuracy and reliability improvements are significant enough that people are accepting that complexity.

Regulatory Compliance Automation Is Suddenly a Big Deal

This wasn’t on my radar as a major trend until mid-2025, but regulatory compliance automation has exploded.

Multiple factors converged: The EU AI Act implementation began in earnest, several U.S. states passed AI regulation, industry-specific rules proliferated, and companies realized they couldn’t manually track compliance for their expanding AI usage.

I’ve worked with three clients implementing “AI governance platforms” in the past six months. These tools automatically inventory what AI systems a company uses, classify them by risk level, track data usage, document decision-making processes, and generate compliance reports.

For a financial services client, this system monitors their AI automation tools to ensure they’re not introducing bias in lending decisions, tracks what data each AI accesses, maintains audit logs, and alerts when any system behaves outside defined parameters.

It’s automation to manage automation—very meta, slightly dystopian, but practically necessary for regulated industries.
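A stripped-down version of that inventory-classify-alert loop might look like this. Everything here is a hypothetical illustration: the `HIGH_RISK_USES` set is not a legal classification under the EU AI Act, and a single demographic-parity gap is only one of several fairness checks a real lending audit would run.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical rule: use cases treated as high risk. The EU AI Act defines its
# own categories; this set is illustrative, not a legal classification.
HIGH_RISK_USES = {"lending", "hiring", "medical", "insurance"}


@dataclass
class AISystem:
    name: str
    use_case: str
    data_sources: list[str]
    # Share of applicants approved, per demographic group, over the review period.
    approval_rate_by_group: dict[str, float] = field(default_factory=dict)


def audit(inventory: list[AISystem], max_gap: float = 0.10) -> list[dict]:
    """Classify each system, record its data access, and flag parity gaps."""
    report = []
    for system in inventory:
        tier = "high" if system.use_case in HIGH_RISK_USES else "limited"
        rates = list(system.approval_rate_by_group.values())
        # One simple fairness metric (demographic parity gap); real audits use several.
        gap = max(rates) - min(rates) if rates else 0.0
        report.append({
            "system": system.name,
            "tier": tier,
            "data_sources": system.data_sources,
            "parity_gap": round(gap, 3),
            "alert": tier == "high" and gap > max_gap,
        })
    return report


inventory = [
    AISystem("loan-scorer", "lending", ["credit_bureau", "applications"],
             {"group_a": 0.62, "group_b": 0.48}),
    AISystem("faq-bot", "support", ["help_center"]),
]
for row in audit(inventory):
    print(row["system"], row["tier"], row["alert"])
# loan-scorer high True
# faq-bot limited False
```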

The vendors in this space barely existed 18 months ago. Now it’s a competitive category with dozens of solutions. Every enterprise software vendor is adding compliance features to their AI offerings because it’s becoming a dealbreaker for procurement.

What surprised me: Smaller companies are also adopting this, not because they’re required to but because they want to get ahead of regulations they expect are coming. That’s unusual—companies typically drag their feet on compliance until forced. The proactive approach suggests people genuinely expect regulatory scrutiny to intensify.

Physical World Automation Is Accelerating (Outside of Self-Driving Cars)

We’ve heard about autonomous vehicles for a decade with limited practical deployment. The automation trend I’m actually seeing in the physical world is more boring but more real: warehouses, agriculture, inspection, and delivery.

I consulted for a logistics company implementing warehouse automation in Q4 2025. They deployed AI-guided robots for inventory management, picking, and packing. Not humanoid robots doing human jobs—specialized machines optimized for specific tasks.

What’s new in 2026 versus previous warehouse automation: The AI adapts to changing conditions without reprogramming. When they rearranged the warehouse layout, the robots figured it out. When they added new product types, the system learned to handle them. When seasonal demand shifted, the AI reoptimized routing and workflow.

Previous automation would’ve required manual reconfiguration for each change. This adapts autonomously, making it practical for more dynamic environments.

I’m seeing similar patterns in:

Agriculture: AI-guided equipment for precision farming, with planting, watering, and harvesting adapted to real-time conditions. A farmer I know deployed a system that monitors individual plants (literally thousands of them) and makes micro-adjustments to watering based on each plant’s needs. His water usage dropped 30% while yield increased 12%.

Inspection and Maintenance: Drones and robots with AI vision inspecting infrastructure, detecting problems, and in some cases performing minor repairs. A facilities manager showed me footage of a drone autonomously inspecting a warehouse roof, identifying three areas needing repair that visual inspection had missed.

Last-Mile Delivery: Not the humanoid delivery robots you see in tech demos, but specialized autonomous vehicles operating in controlled environments. A hospital deployed AI-driven carts moving supplies between departments. A corporate campus uses outdoor autonomous delivery for inter-building mail.

These aren’t replacing all human workers (a claim I’m tired of hearing). They’re handling specific tasks—often dangerous, tedious, or physically demanding work—while humans focus on oversight, exceptions, and judgment calls.

The “Automation Stack” Is Becoming Standard

This time last year, most companies had scattered automation tools that didn’t talk to each other. In 2026, we’re seeing standardization around integrated automation platforms.

The typical mid-sized company I work with now has what they call their “automation stack”:

- Workflow automation layer (Zapier, Make, or enterprise RPA)

- AI reasoning layer (ChatGPT Enterprise, Claude, or Gemini for Business)

- Data integration middleware (MuleSoft, Workato, or similar)

- Industry-specific automation tools (varies by vertical)

- Governance and monitoring (newer category, emerging vendors)

These tools are increasingly designed to work together. APIs are more standardized. Data flows more smoothly. You can build an automation in one tool that calls AI from another, pulls data from a third, and logs everything in a fourth without extensive custom coding.
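One way to picture that layering is as narrow interfaces, with each vendor hidden behind an adapter. In this Python sketch the `DataSource`, `Reasoner`, and `AuditLog` protocols and the in-memory stand-ins are hypothetical; in practice each would wrap a real product’s API.

```python
from typing import Protocol


class DataSource(Protocol):
    def fetch(self, record_id: str) -> dict: ...


class Reasoner(Protocol):
    def summarize(self, record: dict) -> str: ...


class AuditLog(Protocol):
    def log(self, event: str, payload: dict) -> None: ...


def run_workflow(record_id: str, source: DataSource,
                 reasoner: Reasoner, audit_log: AuditLog) -> str:
    """Pull data from one layer, reason with another, log to a third."""
    record = source.fetch(record_id)
    audit_log.log("fetched", {"id": record_id})
    summary = reasoner.summarize(record)
    audit_log.log("summarized", {"id": record_id, "chars": len(summary)})
    return summary


# Stand-ins for real vendor connectors, so the sketch runs on its own.
class DictSource:
    def __init__(self, rows: dict):
        self.rows = rows

    def fetch(self, record_id: str) -> dict:
        return self.rows[record_id]


class TemplateReasoner:
    def summarize(self, record: dict) -> str:
        return ", ".join(f"{k}={v}" for k, v in sorted(record.items()))


class ListLog:
    def __init__(self):
        self.events = []

    def log(self, event: str, payload: dict) -> None:
        self.events.append((event, payload))


log = ListLog()
print(run_workflow("inv-7", DictSource({"inv-7": {"customer": "Acme", "amount": 1200}}),
                   TemplateReasoner(), log))  # amount=1200, customer=Acme
```

A side benefit of this shape is that replacing a vendor means writing one new adapter rather than rebuilding the workflow, which softens some of the lock-in risk described below.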

This standardization is making automation more accessible to companies without large IT teams. I’m seeing 20-person companies implementing sophisticated automation that would’ve required enterprise resources two years ago.

The downside: Vendor lock-in and stack complexity. Companies are building critical workflows dependent on third-party platforms that could change pricing, features, or shut down. I’ve helped two clients in the past year migrate automation stacks when a vendor changed terms unfavorably. It’s painful.

AI Is Automating AI Development (Yes, Really)

This trend feels recursive in a way that’s both fascinating and concerning. AI tools that build and optimize other AI tools are moving from research labs to practical deployment.

I watched a developer use Cursor (an AI coding editor) to build a custom automation tool in about one-fifth the time traditional coding would’ve required. This went well beyond autocomplete: the AI understood the requirements, suggested an architecture, generated code, debugged issues, and optimized performance.

More striking: Tools that automatically fine-tune AI models, optimize prompts, and even design new neural network architectures. A data scientist I know used to spend days tuning models for specific tasks. Now there are tools that automate that process, testing thousands of configurations and selecting optimal parameters in hours.
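The simplest form of “testing thousands of configurations” is random search: sample settings, score each one, keep the best. The tools described here likely use smarter strategies (Bayesian optimization, early stopping), so treat this as an illustration of the idea, not of any particular product. The `toy_objective` function is a hypothetical stand-in for training and validating a real model.

```python
import random


def random_search(objective, space, n_trials=200, seed=0):
    """Sample configurations at random and keep the best-scoring one."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    best_config, best_score = None, float("-inf")
    for _ in range(n_trials):
        config = {name: rng.choice(values) for name, values in space.items()}
        score = objective(config)
        if score > best_score:
            best_config, best_score = config, score
    return best_config, best_score


# Toy objective standing in for "train a model and measure validation accuracy".
def toy_objective(config):
    penalty = abs(config["depth"] - 4)
    penalty += 0 if config["learning_rate"] == 0.01 else 1
    return -penalty


space = {"learning_rate": [0.1, 0.01, 0.001], "depth": [2, 4, 8]}
print(random_search(toy_objective, space))
```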

AutoML (automated machine learning) existed before, but its quality and accessibility in 2026 are different. Non-experts can now deploy sophisticated custom AI models for specific use cases.

Example from a manufacturing client: They needed AI to detect product defects. Previously, that would’ve required hiring specialized ML engineers, months of development, and extensive tuning. Using AutoML tools, their existing IT team built a working system in three weeks, with accuracy comparable to what specialists would’ve achieved.

This democratization is powerful but creates new risks. People are deploying AI without fully understanding what they’re building or its limitations. I’ve seen poorly designed automation cause real problems because implementation was easy even though the conceptual framework was flawed.

Proactive Automation Is Getting Ahead of Problems

The shift from reactive to proactive automation might be the most significant capability change I’m seeing.

Traditional automation: Something happens (a trigger), automation responds (an action).

Proactive automation in 2026: AI predicts what will happen and takes action before the triggering event.

I’ll give you a specific case. An e-commerce client implemented predictive inventory automation. Instead of reordering products when stock hits a threshold (reactive), the AI predicts demand based on trends, seasonality, marketing campaigns, competitor activity, and dozens of other factors. It orders inventory before demand spikes.

The results were dramatic—they reduced stockouts by 68% while simultaneously reducing excess inventory by 40%. The AI was anticipating demand shifts before traditional metrics would’ve signaled them.
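The difference between the two reorder policies can be sketched in a few lines. This assumes a demand forecast already exists (producing it is the hard, model-driven part) and shows only how it changes the decision; the forecast numbers, lead time, and safety stock are hypothetical.

```python
def reactive_should_reorder(stock: int, threshold: int) -> bool:
    """Classic trigger: reorder only once stock has already fallen to the threshold."""
    return stock <= threshold


def predictive_order_qty(stock: int, on_order: int, daily_forecast: list,
                         lead_time_days: int, safety_stock: int) -> int:
    """Order enough now that forecast demand during the lead time
    doesn't push stock below the safety level."""
    demand_during_lead = sum(daily_forecast[:lead_time_days])
    projected = stock + on_order - demand_during_lead
    return max(0, safety_stock - projected)


# Hypothetical forecast: 10 units/day, jumping to 40/day when a campaign starts on day 6.
forecast = [10] * 5 + [40] * 5
print(reactive_should_reorder(stock=120, threshold=50))          # False
print(predictive_order_qty(stock=120, on_order=0, daily_forecast=forecast,
                           lead_time_days=7, safety_stock=30))   # 40
```

With 120 units on hand and a 50-unit threshold, the reactive rule sees no problem, while the forecast-aware rule orders 40 units ahead of the campaign spike.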

I’m seeing this pattern across applications:

Predictive Maintenance: Equipment monitored by AI that predicts failures before they happen, scheduling maintenance proactively. A manufacturing client reduced unplanned downtime by 55%.

Customer Churn Prevention: AI identifying customers likely to leave before they exhibit obvious signs, triggering retention efforts early. Success rate on preventing churn roughly doubled compared to reactive outreach.

Fraud Detection: Financial AI catching fraudulent patterns in their early stages rather than after damage is done.

Content Optimization: Marketing AI predicting which content will resonate and optimizing before publishing rather than adjusting based on post-publication performance.

The limitation is that predictions aren’t perfect. Acting on false positives wastes resources. I’ve seen companies struggle with calibrating how aggressively to act on AI predictions versus waiting for confirmation.
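One standard way to frame that calibration question is expected value: act when the predicted probability, times the value of preventing the event, times how often the intervention actually works, exceeds the cost of acting. The sketch assumes the model’s probabilities are well calibrated, which is itself something to verify; the dollar figures, success rate, and customer names are hypothetical.

```python
def action_threshold(cost_of_action: float, value_if_prevented: float,
                     success_rate: float = 1.0) -> float:
    """Probability above which acting has positive expected value."""
    return cost_of_action / (success_rate * value_if_prevented)


def customers_to_contact(churn_probs: dict, cost_of_action: float,
                         value_if_prevented: float, success_rate: float = 1.0) -> list:
    """Pick customers whose predicted churn risk clears the break-even threshold."""
    cutoff = action_threshold(cost_of_action, value_if_prevented, success_rate)
    return sorted(name for name, p in churn_probs.items() if p > cutoff)


# Hypothetical numbers: a $50 retention offer, $600 of value kept per saved customer.
probs = {"acme": 0.40, "globex": 0.10, "initech": 0.05}
print(action_threshold(50, 600))                                # about 0.083
print(customers_to_contact(probs, 50, 600))                     # ['acme', 'globex']
print(customers_to_contact(probs, 50, 600, success_rate=0.5))   # ['acme']
```

Lowering the assumed success rate raises the bar, which is one concrete way to encode “how aggressively to act” instead of tuning it by feel.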

No-Code Automation Platforms Are Genuinely Useful Now

I was skeptical about no-code/low-code automation for years. Early platforms were limiting and broke easily. That’s changed substantially.

In 2026, I’m seeing non-technical people build sophisticated automation that would’ve required developers previously.

A marketing manager I know built an entire lead nurturing automation system using no-code tools. It captures leads from multiple sources, scores them based on behavior and fit, segments them into different tracks, delivers personalized content sequences, hands them off to sales when they’re ready, and tracks everything in analytics. Zero code written.

Could a developer have built something more optimized? Probably. But what she built works well, she can modify it herself when needs change, and it was operational in a week versus the months it would’ve taken to spec, develop, and deploy a custom solution.

The interface quality has improved dramatically. Visual workflow builders that are actually intuitive. AI assistants that help build automation (“I want to send personalized follow-ups to webinar attendees based on what questions they asked”). Templates and pre-built components that are genuinely useful, not just toys.

This democratization is reshaping who builds automation. It’s moving from IT projects to business function ownership. Marketing teams build their own automation. Sales builds theirs. Operations builds theirs. IT increasingly provides infrastructure and governance rather than building everything.

The risk: Proliferation of automation without central visibility or control. I’ve worked with companies that discovered they had 50+ separate automation workflows nobody had fully inventoried, creating maintenance nightmares and security gaps.

Ethical AI and Responsible Automation Are Table Stakes

This has shifted from nice-to-have to required. In early 2025, ethical AI was mostly lip service. By 2026, it’s influencing real decisions.

Clients now routinely ask about bias, fairness, transparency, and accountability when evaluating automation tools. Vendors that can’t answer these questions credibly are losing deals.

I’ve seen procurement processes where AI ethics and responsible automation were explicit evaluation criteria, weighted as heavily as functionality and cost. That was rare 18 months ago.

What this looks like practically:

- Bias testing for AI systems that impact people (hiring, lending, healthcare, criminal justice)

- Explainability requirements (can the AI explain its decisions?)

- Human-in-the-loop for high-stakes automation (AI recommends, humans approve)

- Data governance and privacy controls

- Impact assessments before deploying automation (who benefits, who’s harmed)

A healthcare client implemented an AI diagnostic assistance tool but built in mandatory human physician review before any clinical decision. The AI suggests, humans decide. It slows the process but maintains accountability and catches AI errors.
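The “AI suggests, humans decide” pattern reduces to a routing rule. In this hypothetical sketch, only low-stakes, high-confidence recommendations proceed automatically and everything else waits for an approver; in a clinical setting like the one above, every recommendation would be marked high-stakes, so a physician always decides. The field names and the 0.95 threshold are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class Recommendation:
    subject: str
    action: str
    confidence: float   # model's own confidence, 0.0 to 1.0
    high_stakes: bool   # e.g. anything touching a clinical decision


def decide(rec: Recommendation,
           approver: Callable[[Recommendation], bool],
           auto_threshold: float = 0.95) -> Tuple[Optional[str], str]:
    """Return (action to take or None, who made the call)."""
    # Only low-stakes, high-confidence recommendations skip review.
    if not rec.high_stakes and rec.confidence >= auto_threshold:
        return rec.action, "automation"
    # Everything else waits for a human, who can reject it outright.
    return (rec.action if approver(rec) else None), "human"


reminder = Recommendation("patient-123", "send appointment reminder", 0.99, False)
dosing = Recommendation("patient-123", "adjust dosage", 0.99, True)
print(decide(reminder, approver=lambda r: True))   # ('send appointment reminder', 'automation')
print(decide(dosing, approver=lambda r: False))    # (None, 'human')
```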

Not everyone is doing this well. There’s performative “ethics washing” where companies claim to care but don’t implement meaningful safeguards. But the trend is toward more serious engagement with these issues, driven by regulation, public pressure, and genuine recognition that unchecked automation can cause harm.

The Integration Nightmare Is Getting Better (Slowly)

One of the biggest practical obstacles to AI automation has been getting different systems to work together. That’s improving, though not as fast as I’d like.

APIs are more standardized. Common data formats are emerging. Major platforms (Salesforce, Microsoft, Google, SAP) are building integration layers designed for AI automation.

I spent weeks in 2024 trying to connect three different business systems so automation could work across them. When a similar need emerged in early 2026, it took days instead of weeks. Still not trivial, but meaningfully easier.

The rise of “integration platforms as a service” (iPaaS) designed for AI automation is helping. Tools that provide connectors to hundreds of common business applications, handle data transformation, and manage the complexity of keeping everything synced.

But we’re still far from seamless. Every automation project I work on still involves wrestling with integration issues. Different data formats, incompatible authentication systems, rate limits on API calls, version changes that break existing connections.
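Rate limits are one of the more mechanical of these problems, and the common remedy is retrying with exponential backoff and jitter. The `RateLimited` exception here is a hypothetical stand-in; real client libraries raise their own error types on an HTTP 429 response, and some tell you how long to wait.

```python
import random
import time


class RateLimited(Exception):
    """Hypothetical error a connector raises on an HTTP 429 response."""


def call_with_backoff(fn, max_attempts=5, base_delay=0.5, max_delay=30.0,
                      sleep=time.sleep):
    """Retry fn() on rate limiting, doubling the wait each time (with jitter)."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of retries: surface the failure instead of hiding it
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(delay * random.uniform(0.5, 1.0))  # jitter spreads out retries


# Demo: a call that is rate-limited twice, then succeeds. Sleeps are recorded, not taken.
responses = iter([RateLimited(), RateLimited(), "ok"])


def flaky_call():
    result = next(responses)
    if isinstance(result, Exception):
        raise result
    return result


waits = []
print(call_with_backoff(flaky_call, sleep=waits.append), len(waits))  # ok 2
```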

The dirty secret of automation: Maintenance. Every automation requires ongoing attention to keep working as connected systems evolve. Companies underestimate the maintenance burden and end up with broken automations they don’t discover until something important fails.
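A cheap guard against silent failures is a heartbeat check: record each automation’s last successful run and flag any that have gone quiet well past their schedule. The automation names, intervals, and two-interval grace period below are hypothetical choices.

```python
from datetime import datetime, timedelta


def stale_automations(last_success: dict, expected_every: dict, now: datetime,
                      grace: float = 2.0) -> list:
    """Names of automations whose last successful run is overdue.

    last_success: name -> datetime of the most recent successful run
    expected_every: name -> timedelta between scheduled runs (default: daily)
    An automation is flagged once it has been silent for `grace` times its interval.
    """
    return sorted(
        name for name, last in last_success.items()
        if now - last > grace * expected_every.get(name, timedelta(days=1))
    )


now = datetime(2026, 3, 10, 9, 0)
last_success = {
    "invoice-sync": datetime(2026, 3, 10, 8, 0),   # hourly, ran an hour ago
    "crm-dedupe": datetime(2026, 3, 2, 2, 0),      # daily, silent for 8 days
    "weekly-report": datetime(2026, 3, 6, 7, 0),   # weekly, on schedule
}
expected_every = {
    "invoice-sync": timedelta(hours=1),
    "crm-dedupe": timedelta(days=1),
    "weekly-report": timedelta(weeks=1),
}
print(stale_automations(last_success, expected_every, now))  # ['crm-dedupe']
```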

What’s Not Happening (Despite the Hype)

Let me be clear about trends that get tons of attention but aren’t meaningfully impacting most businesses yet:

AGI/Superintelligence: We’re nowhere close. Current AI is still narrow—good at specific tasks, not general intelligence.

Mass Job Displacement: Automation is changing jobs more than eliminating them. Yes, some roles are shrinking or disappearing, but we’re also seeing new roles emerge. The net employment impact is complex and varies by industry.

Fully Autonomous Operations: No company I work with has “lights out” automation running critical functions without human oversight. The idea of businesses running themselves with minimal human involvement isn’t close to reality.

Perfect AI: Systems still make mistakes, have biases, hallucinate, and fail in unexpected ways. Anyone claiming otherwise is lying or selling something.

The Skills Gap Is Real and Growing

Here’s an uncomfortable truth: The pace of AI automation advancement is outstripping most people’s ability to adapt.

I see this daily. People with strong traditional skills—analysts, coordinators, writers, even managers—finding that AI can now do substantial portions of their work. Not all of it, but enough to be threatening.

The workers thriving are those who’ve learned to work with AI automation—using it to amplify their capabilities rather than competing against it. But developing those skills takes time and effort that many people aren’t able to invest, especially if they’re already overwhelmed by their current work.

Companies are struggling with training. The technology changes too fast for traditional training programs. By the time a course is developed and deployed, the tools have evolved and the content is outdated.

I’m seeing a shift toward continuous learning cultures rather than one-time training. The companies doing this well give people time and resources to experiment with new tools, share what they learn, and gradually build capabilities.

But plenty of workers are being left behind. That’s a real social problem without easy answers.

Looking Forward: Where This Goes Next

Based on what I’m seeing in beta products and development roadmaps, here are trends I expect to accelerate:

Multimodal Automation: Systems that seamlessly work with text, voice, images, and video rather than being locked into single modalities. You’ll describe what you want verbally while showing examples visually, and automation will understand and execute.

Collaborative AI: Multiple AI systems working together on complex problems, each contributing different capabilities. Already emerging but will become standard.

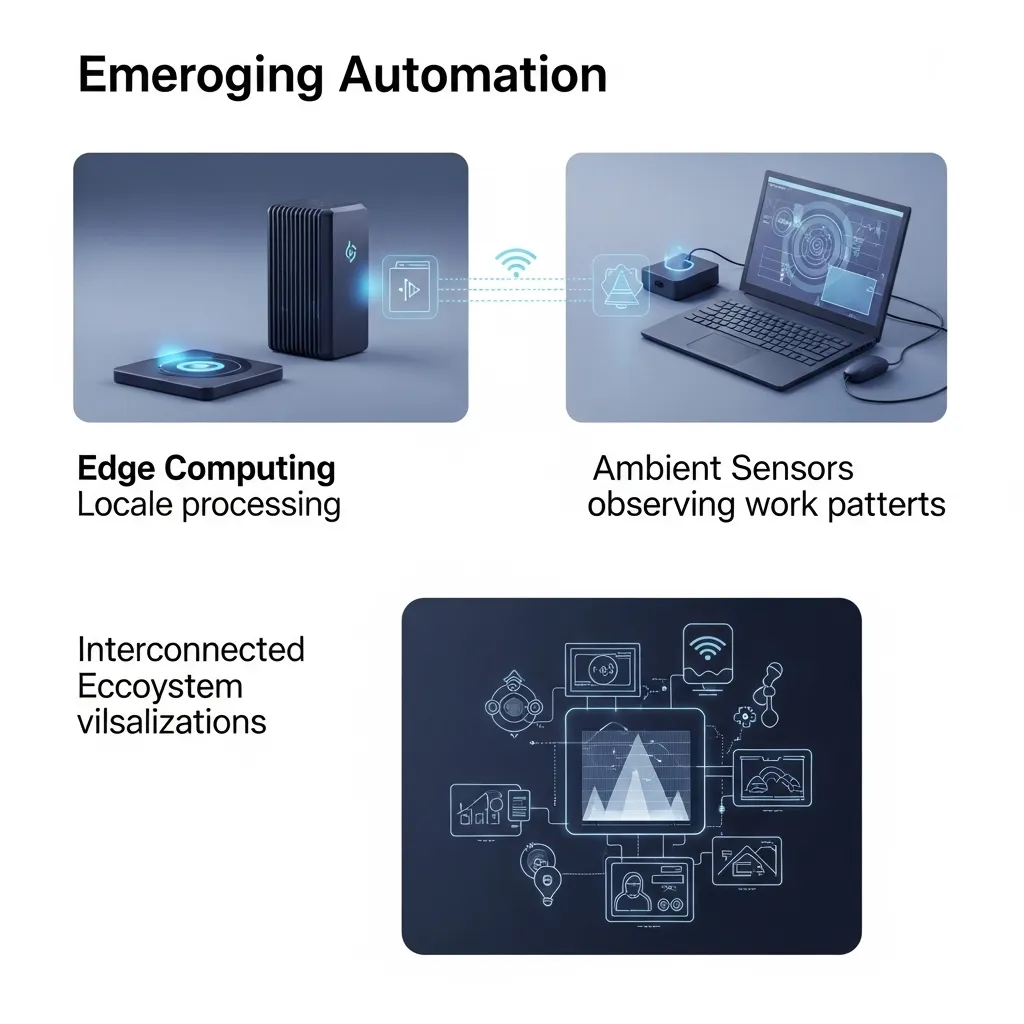

Edge AI: More automation running locally on devices rather than in the cloud, improving speed, privacy, and reliability. Relevant for manufacturing, healthcare, and anywhere latency or data privacy matters.

Ambient Automation: Systems that observe how you work and automatically create helpful automation without you explicitly building it. This is starting to appear and will likely expand.

Ecosystem Automation: Moving beyond individual company automation to automated processes spanning multiple organizations. Supply chain coordination, B2B transactions, collaborative workflows.

The Bottom Line: Measured Optimism

After working intensively with AI automation for several years, I’m optimistic about the potential but realistic about the challenges.

These tools are genuinely making certain work faster, cheaper, and sometimes better. The productivity gains are real for people and companies that implement thoughtfully.

But automation is a tool, not magic. It amplifies existing capabilities and strategies. If your strategy is flawed, automation executes that flawed strategy very efficiently. If your processes are broken, automating them just means they break faster.

The winners in 2026 aren’t those using the most advanced AI or automating the most aggressively. They’re the ones being thoughtful about where automation adds value, maintaining human judgment for what matters, and building systems that are reliable, ethical, and aligned with genuine business goals.

The gap between possibility and practice remains large. Everything I’ve described is technically possible, but most companies are implementing a fraction of it. Implementation challenges—technical, organizational, cultural—remain significant.

We’re in the middle of a genuine shift in how work happens. Not the overnight revolution promised by enthusiasts or the job-destroying apocalypse feared by critics. Something more gradual, more nuanced, and more interesting than either extreme.

That’s where we are in 2026. Ask me again in 12 months and I’ll probably tell you a substantially different story. The pace of change isn’t slowing.

Frequently Asked Questions

1. What AI automation trends should small businesses focus on in 2026?

Small businesses should focus on high-impact, low-complexity automation rather than trying to implement everything. Start with workflow automation connecting your existing tools (Zapier, Make), basic AI for content creation and communication (ChatGPT, Claude), and customer service automation if you handle a high volume of inquiries. Don’t chase advanced trends like autonomous agents or custom AI models; stick with proven, accessible tools that solve specific pain points. The best first automations handle repetitive, time-consuming tasks that don’t require complex judgment: appointment scheduling, email triage, social media posting, basic data entry, invoice processing. Get ROI from simple automation before moving to sophisticated implementations. Many small businesses waste time on flashy automation when basic workflow improvements would deliver more value.

2. Is AI automation going to eliminate most jobs by 2027-2028?

No, at least not based on current trajectories. What I’m seeing is job transformation rather than elimination. Roles are changing—tasks that were manual are automated, but new responsibilities emerge around managing, training, and optimizing automation. Some specific job categories are shrinking (basic data entry, simple content moderation, routine customer service), while others are growing (AI trainers, automation specialists, AI ethicists, agent managers). The bigger risk isn’t mass unemployment but a skills gap—workers whose skills don’t evolve struggling to remain relevant. Most jobs in 2026 involve some automation but aren’t fully automated. Humans handle strategy, creativity, complex problem-solving, relationship building, and oversight while AI handles routine execution, analysis, and repetitive tasks. The employment impact varies dramatically by industry and role—generalizations are misleading.

3. How much should companies budget for AI automation in 2026?

This varies wildly by company size and objectives, but I’ll give you realistic ranges. Small businesses (under 20 employees) should expect $200-1,000/month for meaningful automation—workflow tools, AI assistants, maybe one specialized solution. Mid-sized companies (50-500 employees) typically spend $2,000-15,000/month across multiple tools, integration platforms, and potentially custom development. Enterprises easily spend six or seven figures annually. But costs aren’t just tools—implementation time, training, maintenance, and integration work often cost more than software licenses. Budget for 2-3x your software costs in implementation and ongoing maintenance. Start small with proven ROI before scaling investment. The companies struggling aren’t those spending too little but those spending too much too fast on automation that doesn’t deliver value. Calculate ROI ruthlessly—how much time/money saved versus total cost including hidden expenses.
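To make the “2-3x” guidance concrete, here is a back-of-the-envelope calculator. It reads that guidance as implementation and maintenance costing 2-3 times the software spend on top of the software itself (an interpretation, not a formula from any vendor), and the hours saved and hourly cost are hypothetical.

```python
def annual_total_cost(monthly_software: float, overhead_multiplier: float = 2.5) -> float:
    """Software plus implementation/maintenance, read as 2-3x software on top."""
    software = 12 * monthly_software
    return software + overhead_multiplier * software


def annual_savings(hours_per_week: float, hourly_cost: float, weeks: int = 48) -> float:
    """Labor cost avoided, assuming the saved hours are actually redeployed."""
    return hours_per_week * hourly_cost * weeks


def roi(savings: float, cost: float) -> float:
    return (savings - cost) / cost


cost = annual_total_cost(500)       # $6,000 software + $15,000 overhead
savings = annual_savings(15, 40)    # 15 h/week at $40/h
print(cost, savings, round(roi(savings, cost), 3))  # 21000.0 28800 0.371
```

Counting only the $6,000 in licenses, the same savings would suggest an ROI of 3.8; with the overhead included it is about 0.37. That gap is the hidden-expense effect described above.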

4. What are the biggest mistakes companies make with AI automation in 2026?

The biggest mistake is automating without strategy—implementing automation because it’s trendy rather than because it solves specific problems. Related mistakes: Automating broken processes (just makes problems happen faster), over-automating and removing necessary human judgment, under-investing in change management and training (technology works, people don’t adopt it), neglecting maintenance (automation breaks when systems change), chasing the newest tools rather than mastering basics, lacking governance (automation sprawl creates security and compliance risks), and failing to measure actual ROI. I’ve seen companies spend six figures on automation that saved maybe $20K in labor while creating new problems. Start with clear objectives, pilot before scaling, maintain human oversight for important decisions, invest in training, establish governance, and measure results honestly. Automation should solve real problems, not create impressive-sounding initiatives for board presentations.

5. How do you balance automation efficiency with maintaining human skills and judgment?

This is one of the hardest challenges in 2026. My approach: Identify tasks where automation clearly adds value without significant risk (repetitive, time-consuming, well-defined parameters) and automate those aggressively. Maintain human involvement in areas requiring judgment, creativity, ethics, or where errors have serious consequences. Implement “human-in-the-loop” systems for important decisions—AI recommends, humans approve. Rotate people through different roles so they maintain skills rather than only supervising automation. Invest in developing skills that complement rather than compete with AI—strategic thinking, creativity, emotional intelligence, complex problem-solving. Create feedback loops where humans review AI outputs and provide corrections that improve the system. Never automate something you don’t understand yourself—if automation breaks, someone needs expertise to fix it. The goal isn’t maximum automation; it’s optimal human-AI collaboration where each does what they’re best at.