AI Agents: A Beginner’s Guide to Understanding the Technology Reshaping How We Work

I still remember the first time I encountered what we now call an AI agent. It was late 2023, and I was wrestling with a repetitive task—sorting through hundreds of customer emails, categorizing them, and routing them to the right departments. A colleague mentioned she’d set up something that “just handled it automatically” overnight. Not a simple filter or basic automation, but something that actually understood context, made judgment calls, and improved over time.

That was my introduction to AI agents, and honestly, I was skeptical. We’d all been burned by overhyped automation tools before. But here we are in 2026, and AI agents have become as commonplace in many workplaces as email clients and project management software. They’re not the sci-fi robots we imagined, but they’re transforming how we handle everything from customer service to data analysis.

If you’re just starting to explore what AI agents are and whether they might be useful for you, you’re in the right place. This guide cuts through the hype to give you a practical understanding of these systems—what they actually do, where they excel, and where they still fall short.

What Exactly Is an AI Agent?

Let’s start with the basics, because the term “AI agent” gets thrown around pretty loosely these days.

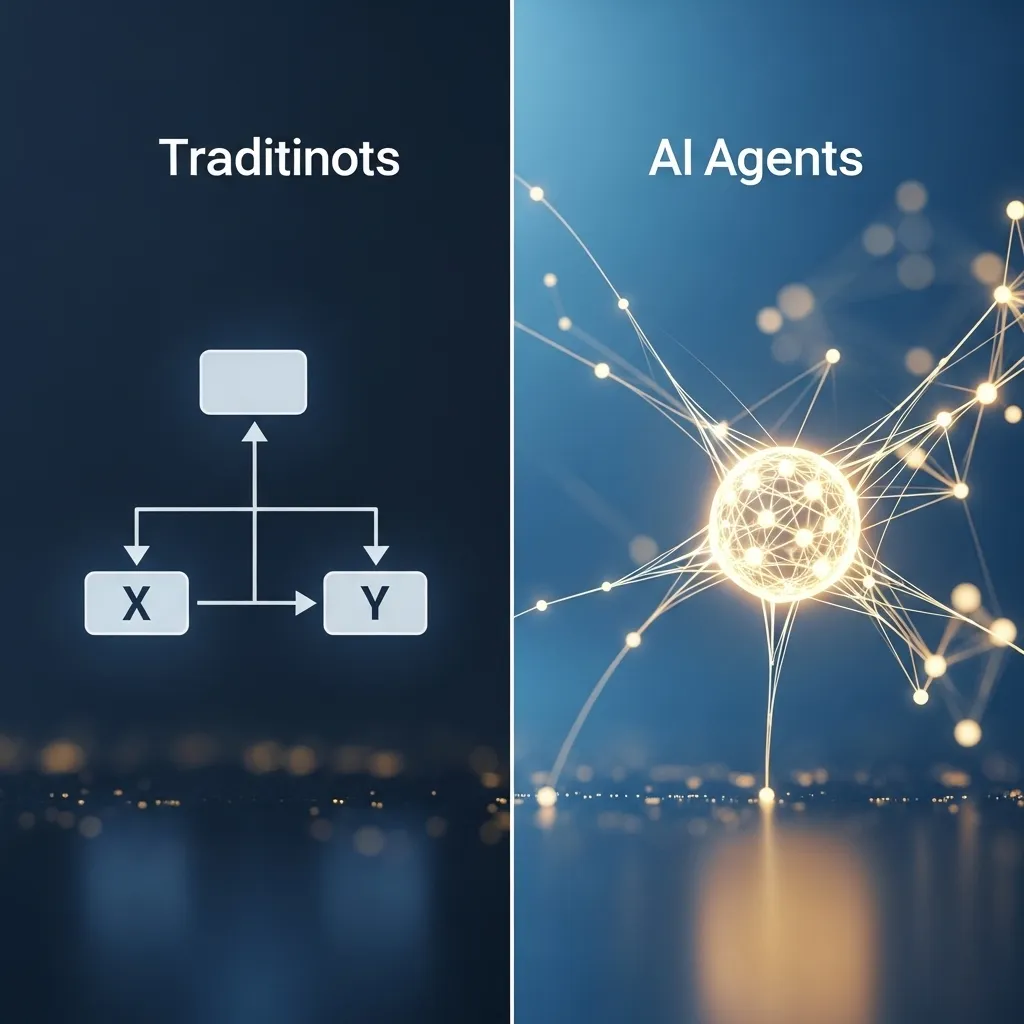

An AI agent is essentially a software program powered by artificial intelligence that can perceive its environment, make decisions, and take actions to achieve specific goals—with varying degrees of autonomy. The key difference between an AI agent and older automation tools is the ability to adapt, learn from context, and handle situations that weren’t explicitly programmed.

Think of it this way: A traditional chatbot follows a decision tree. If you say X, it responds with Y. An AI agent, on the other hand, understands the intent behind what you’re saying, considers multiple factors, and generates an appropriate response even if it’s never encountered that exact scenario before.

The “agent” part comes from the fact that these systems act on your behalf. You give them an objective, and they work toward it somewhat independently, making micro-decisions along the way.

The Core Components That Make AI Agents Work

When I first started digging into how these things actually function, I found it helpful to break them down into their essential parts:

Perception mechanisms allow the agent to take in information from its environment. This could be reading text, analyzing images, monitoring data streams, or even interpreting voice commands. In 2026, multimodal perception—where agents can process text, images, and audio simultaneously—has become standard in most commercial platforms.

Decision-making frameworks are where the “intelligence” happens. Modern AI agents typically use large language models (LLMs) or other machine learning models as their reasoning engine. These models evaluate the perceived information against their goals and determine what action to take next. The better ones incorporate what’s called “chain-of-thought” reasoning, essentially thinking through problems step by step rather than jumping to conclusions.

Action capabilities define what the agent can actually do. Some agents only output text responses. Others can execute code, make API calls to other software, schedule meetings, send emails, or even control physical devices. The trend I’ve noticed is toward “tool use”—agents that can access various software tools and services to accomplish tasks, rather than trying to do everything themselves.

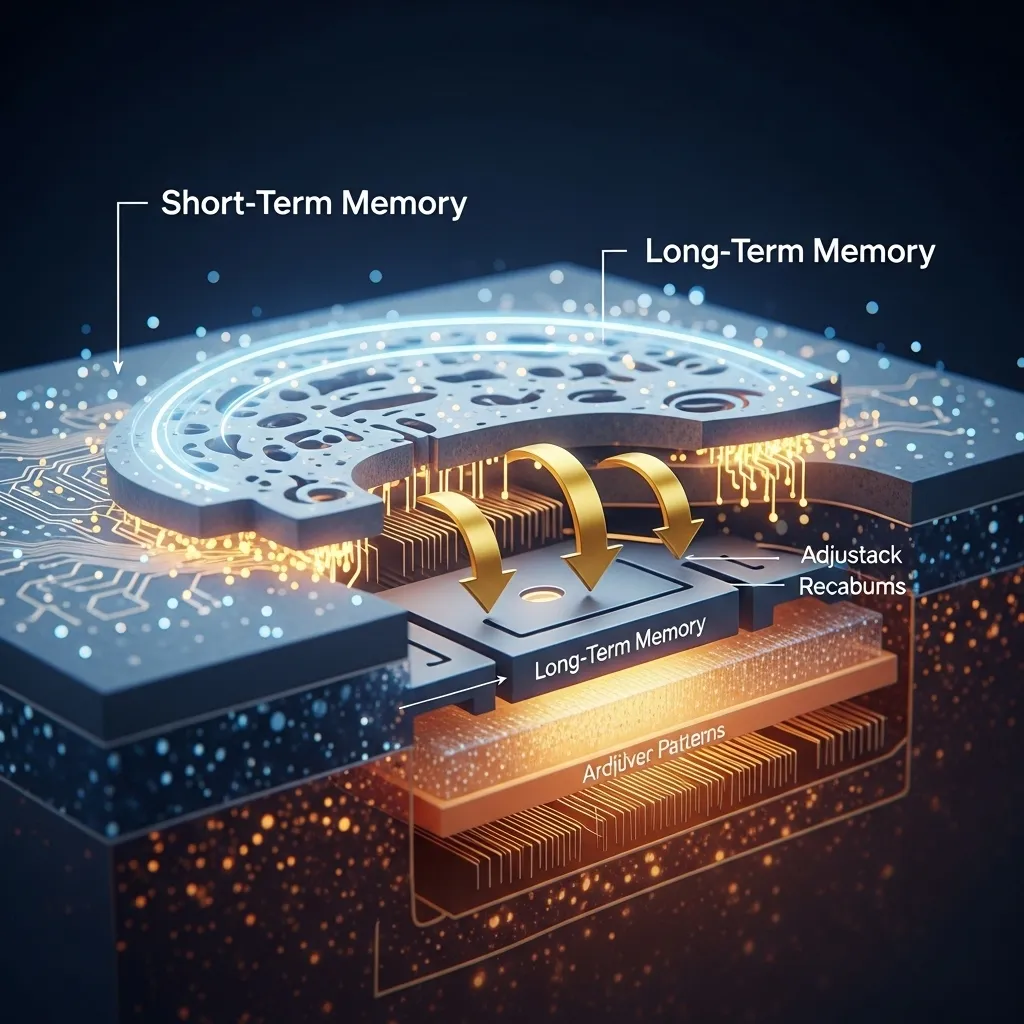

Memory systems help agents maintain context over time. Short-term memory keeps track of the current conversation or task. Long-term memory stores information about past interactions, user preferences, and learned patterns. This is an area that’s seen massive improvement lately; agents in 2026 are far better at remembering important details from weeks or months ago.

Feedback loops enable learning and improvement. The most effective agents incorporate user feedback, track their success rates, and adjust their behavior accordingly.
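To make these five parts concrete, here is a minimal sketch in Python of how they might fit together in an email-routing agent like the one from my opening story. Everything in it is hypothetical: the `Agent` class, its method names, and the keyword rules are illustrative stand-ins, and the `decide` method uses simple string checks where a real agent would call a language model.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Toy agent: keyword rules stand in for an LLM reasoning engine."""
    goal: str
    memory: list = field(default_factory=list)        # short-term memory of actions taken
    feedback_log: list = field(default_factory=list)  # (action, was_correct) pairs

    def perceive(self, raw_email: str) -> dict:
        # Perception: turn raw input into a structured observation.
        return {"text": raw_email.lower()}

    def decide(self, observation: dict) -> str:
        # Decision-making: a real agent would query a model here.
        text = observation["text"]
        if "refund" in text or "invoice" in text:
            return "route_to_billing"
        if "password" in text or "login" in text:
            return "route_to_it"
        return "ask_human"  # unsure, so escalate

    def act(self, action: str) -> str:
        # Action: this sketch only records a label; a real agent would call a tool.
        self.memory.append(action)
        return action

    def record_feedback(self, action: str, correct: bool) -> None:
        # Feedback loop: store outcomes so a success rate can be tracked.
        self.feedback_log.append((action, correct))

    def success_rate(self) -> float:
        if not self.feedback_log:
            return 0.0
        return sum(ok for _, ok in self.feedback_log) / len(self.feedback_log)

    def step(self, raw_email: str) -> str:
        # One pass through the loop: perceive, decide, act.
        return self.act(self.decide(self.perceive(raw_email)))


agent = Agent(goal="route customer emails")
first = agent.step("I need a refund for my last invoice")
second = agent.step("Where is your office located?")
agent.record_feedback(first, correct=True)
agent.record_feedback(second, correct=False)
```

The point of the sketch is the shape of the loop, not the rules inside it. Swapping the keyword checks in `decide` for a model call is what turns a script like this into something closer to an agent.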

Types of AI Agents You’ll Actually Encounter

The AI agent landscape has evolved quickly, and different types serve different purposes. Here’s what you’re likely to come across:

Conversational Agents

These are probably the most visible type. They chat with users in natural language to provide information, answer questions, or guide people through processes. Customer service is the obvious use case—and by 2026, it’s become genuinely difficult to tell whether you’re chatting with a human or an agent in many support contexts.

But conversational agents have expanded far beyond customer service. I’ve watched companies deploy them for internal IT support, employee onboarding, and even as “thinking partners” for brainstorming and problem-solving.

The best conversational agents today don’t just answer questions; they ask clarifying questions, remember context from previous conversations, and can switch between topics naturally. They’re also getting better at knowing when to escalate to a human—a crucial skill that early versions sorely lacked.

Task Automation Agents

These agents are focused on getting stuff done. They might monitor your inbox and automatically schedule meetings based on email threads, or track project deadlines across multiple tools and alert team members when tasks are at risk.

I’ve been testing a few of these personally, and when they work well, they’re remarkable. I have one that monitors industry news sources, filters for topics relevant to my work, summarizes the key points, and compiles a digest every morning. It’s saved me hours of reading time each week.

The catch? They require careful setup. You need to define clear goals, grant appropriate permissions, and establish guardrails. An overly aggressive automation agent can make a mess if it’s not properly constrained.
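One way to picture a guardrail is as a small check that sits between what the agent wants to do and what actually runs. The sketch below is an assumption about how that might look, not any platform’s real permission system: the action names and the `check_action` helper are made up for illustration.

```python
# Hypothetical permission lists for an inbox-management agent.
ALLOWED_ACTIONS = {"draft_reply", "schedule_meeting", "send_email", "delete_thread"}
NEEDS_APPROVAL = {"send_email", "delete_thread"}  # hard or impossible to undo


def check_action(action: str, approved_by_human: bool = False) -> str:
    """Return 'run', 'hold', or 'reject' for an action the agent proposes."""
    if action not in ALLOWED_ACTIONS:
        return "reject"  # outside the permissions that were granted
    if action in NEEDS_APPROVAL and not approved_by_human:
        return "hold"    # queue for human review before anything happens
    return "run"


decisions = {
    "draft_reply": check_action("draft_reply"),
    "send_email": check_action("send_email"),
    "wire_money": check_action("wire_money"),
}
```

In this example a draft goes through, an outgoing email waits for a person to approve it, and an action that was never granted is refused outright.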

Analytical Agents

These agents excel at processing large amounts of data and extracting insights. They can analyze sales patterns, identify anomalies in financial data, or spot trends in customer feedback that humans might miss.

What distinguishes analytical agents from traditional business intelligence tools is their ability to explore data more flexibly. Instead of running pre-built reports, you can ask questions in plain language: “Why did sales drop in the Northeast region last quarter?” The agent will query relevant data sources, look for correlations, and present findings with supporting evidence.

Financial services, healthcare, and research sectors have been early adopters. A friend who works in drug discovery told me their lab uses analytical agents to scan thousands of research papers and identify potential drug combinations worth investigating—work that would take human researchers months.

Creative Agents

This category has sparked the most debate, and honestly, the name is a bit misleading. These agents assist with content creation—writing, design, video production, music composition. They don’t replace human creativity so much as augment it, at least in most professional contexts.

I use creative agents regularly in my writing process, though not in the way many people assume. I don’t ask them to write articles for me. Instead, I might use one to quickly generate ten different headline options for a piece, or to reorganize an outline from a different structural angle. They’re particularly useful for overcoming blank-page paralysis.

The marketing world has embraced these tools enthusiastically. Entire campaigns now routinely involve agents generating ad copy variations, creating visual assets, and even personalizing messages for different audience segments.

Personal Assistant Agents

Think of these as the Swiss Army knives of AI agents. They combine elements of the other types to manage various aspects of personal or professional life. They might schedule your calendar, book travel, manage emails, track expenses, and research topics—all from conversational requests.

The vision of a true personal AI assistant has been promised for years, but 2026 is when it’s genuinely become practical for everyday users. The key breakthrough has been cross-platform integration. Modern assistant agents can interact with dozens of different apps and services, which makes them actually useful rather than just novelties.

How AI Agents Actually Learn and Improve

One question I get asked constantly: “Do these things really learn, or are they just fancy scripts?”

The answer is nuanced. Most commercial AI agents in 2026 use foundation models (large AI systems trained on vast amounts of data) as their base intelligence. These foundation models don’t learn from your individual interactions in real time. The GPT, Claude, and Gemini models powering many agents have their knowledge frozen at a training cutoff date.

However, agents implement several mechanisms that create the effect of learning:

Retrieval systems allow agents to access updated information beyond their training data. When you interact with an agent, it might query databases, search current documents, or access APIs to get fresh information relevant to your request.

Fine-tuning involves additional training on specific datasets to specialize the agent for particular tasks or domains. A medical AI agent, for example, might be fine-tuned on medical literature and clinical guidelines.

In-context learning happens within a conversation or session. As you interact with an agent, it builds up context in its working memory and adapts its responses based on what you’ve discussed. This context is usually cleared when the session ends, though some systems maintain conversation history.

Feedback incorporation is where actual learning happens over time. Many commercial agent platforms track user feedback (explicit ratings or implicit signals like whether you accepted a suggestion) and use this data to improve the underlying models or adjust behavior parameters.

Personalization layers store information about individual users’ preferences, communication styles, and frequent requests. This isn’t changing the AI model itself, but rather creating a profile that influences how the agent interacts with you specifically.
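Two of these mechanisms, retrieval and personalization, are easy to sketch. The Python below is a simplified illustration under stated assumptions: it ranks documents by shared words, where production systems typically use embeddings or a search index, and the `retrieve` and `build_prompt` helpers and the sample documents are invented for the example. Notice that nothing here changes the model; it only changes what the model gets to see.

```python
import re


def tokenize(text: str) -> set:
    # Lowercase the text and split it into a set of words and numbers.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list, top_k: int = 1) -> list:
    """Rank documents by how many words they share with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]  # drop non-matches
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(query: str, documents: list, profile: dict) -> str:
    """Assemble the context a model would receive for this request."""
    context = "\n".join(retrieve(query, documents)) or "(no matching documents)"
    return (
        f"User preferences: tone={profile.get('tone', 'neutral')}\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


docs = [
    "Returns are accepted within 30 days of delivery.",
    "Standard shipping takes 3 to 5 business days.",
]
prompt = build_prompt("How many days do I have for returns?", docs, {"tone": "friendly"})
```

The resulting prompt contains the returns policy and the user’s preferred tone, so the model can answer with current information and in the right style even though its own knowledge is frozen.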

The honest truth? Most AI agents today are less about continuous learning and more about sophisticated pattern matching combined with access to the right information at the right time. But that combination is powerful enough to handle an enormous range of tasks.

Getting Started: Choosing Your First AI Agent

If you’re ready to actually try working with an AI agent, the options can be overwhelming. Here’s how I’d recommend approaching the decision:

Start with a specific problem. Don’t get an AI agent because they’re trendy. Identify a concrete pain point—repetitive tasks eating your time, information overload, customer inquiries you can’t keep up with. The best first experience with an agent is solving a real problem.

Consider your technical comfort level. Some platforms require coding and API configuration. Others offer no-code interfaces where you can set up agents through conversation and visual workflows. Be realistic about what you’re equipped to handle or willing to learn.

Evaluate integration needs. The most useful agents connect with your existing tools. Check whether the platform works with your email provider, CRM, project management software, and other critical systems. An agent that exists in isolation has limited value.

Understand the pricing model. Agent platforms typically charge based on usage—messages sent, API calls made, compute time used. Costs can escalate quickly if you’re not careful, especially with agents that run continuously. Many platforms offer free tiers that are perfect for experimentation.

Review data and privacy policies. This is critical and often overlooked. Understand what data the agent provider accesses, how it’s stored, and whether it’s used to train models. If you’re handling sensitive customer or business information, you may need agents that run on-premises or offer specific compliance guarantees.

Some platforms I’ve found beginner-friendly include:

ChatGPT’s GPTs and Assistants API (from OpenAI) offer relatively simple ways to create custom agents with specific instructions and knowledge bases. The interface is approachable for non-technical users, though more advanced functionality requires some coding.

Google’s Agent Builder (part of Vertex AI) has become surprisingly accessible. It provides templates for common use cases and decent documentation. It’s particularly strong if you’re already in the Google ecosystem.

Anthropic’s Claude with tool use has impressed me with its reasoning quality, though it requires a bit more technical setup to create true agents rather than just chatbots.

Microsoft Copilot Studio is worth considering if you’re embedded in the Microsoft world. The integration with Microsoft 365 tools is seamless, and it’s well-suited for business process automation.

Zapier Central targets the no-code crowd and lets you build agents that connect thousands of different services. It’s more limited in reasoning capability but incredibly practical for workflow automation.

Real-World Applications Making an Impact

Theory is fine, but let me share some examples of how AI agents are actually being deployed:

A regional healthcare network I consulted with implemented an agent to handle prescription refill requests. Patients can message or call, and the agent verifies the request, checks for any potential issues, routes to a pharmacist if needed, and processes straightforward refills automatically. It’s handling about 70% of requests without human intervention, freeing clinical staff for more complex patient needs.

An e-commerce company uses analytical agents to monitor their customer reviews across platforms. The agent identifies emerging issues (like a product defect that multiple customers mention), categorizes feedback themes, and alerts the relevant teams. Previously, this required staff to manually read through thousands of reviews weekly.

A friend running a small architecture firm deployed a personal assistant agent that manages his client communications. It doesn’t send emails on his behalf, but it drafts responses based on his writing style, pulls in relevant project information, flags urgent items, and keeps track of what he’s promised to various clients. He says it’s cut his email time by more than half.

I personally use task automation agents to manage my research workflow. When I’m working on a complex topic, I can ask an agent to find recent papers, summarize key findings, identify contradictory viewpoints, and even suggest experts I might interview. What used to take days of literature review now takes hours.

These aren’t flashy applications, but they’re solving real problems and delivering measurable value. That’s what mature AI agent deployment looks like in 2026.

Common Pitfalls and How to Avoid Them

I’ve made plenty of mistakes working with AI agents, and I’ve watched others stumble through similar issues. Here are the most common traps:

Expecting perfection. AI agents will make mistakes. They’ll misunderstand context, provide outdated information, or take actions you didn’t intend. Plan for this. Implement review mechanisms for important tasks. Don’t let agents make irreversible decisions without oversight.

Under-defining or over-defining tasks. Too vague, and the agent flails around ineffectively. Too rigid, and you’ve just built an inflexible script. Finding the right level of specificity takes iteration. Start with clear goals but flexible methods.

Ignoring the feedback loop. If you set up an agent and never review what it’s doing, you’re missing opportunities for improvement and risking drift from your intentions. Schedule regular check-ins to evaluate performance.

Inadequate testing. Always test agents in a sandbox or limited environment first. I once watched someone deploy a customer service agent that confidently provided completely incorrect information about return policies because it was working from outdated documentation. A simple testing phase would have caught this.

Privacy and security oversights. Be cautious about what data you feed to agents, especially those using external APIs. I’ve seen businesses inadvertently expose confidential information by pasting it into public AI platforms without thinking through the implications.

Neglecting the human element. When deploying agents that interact with customers or employees, communicate clearly about what’s happening. People appreciate transparency about whether they’re talking to an AI. And train your team on how to work alongside agents rather than being replaced by them.

The Ethical Dimensions You Should Consider

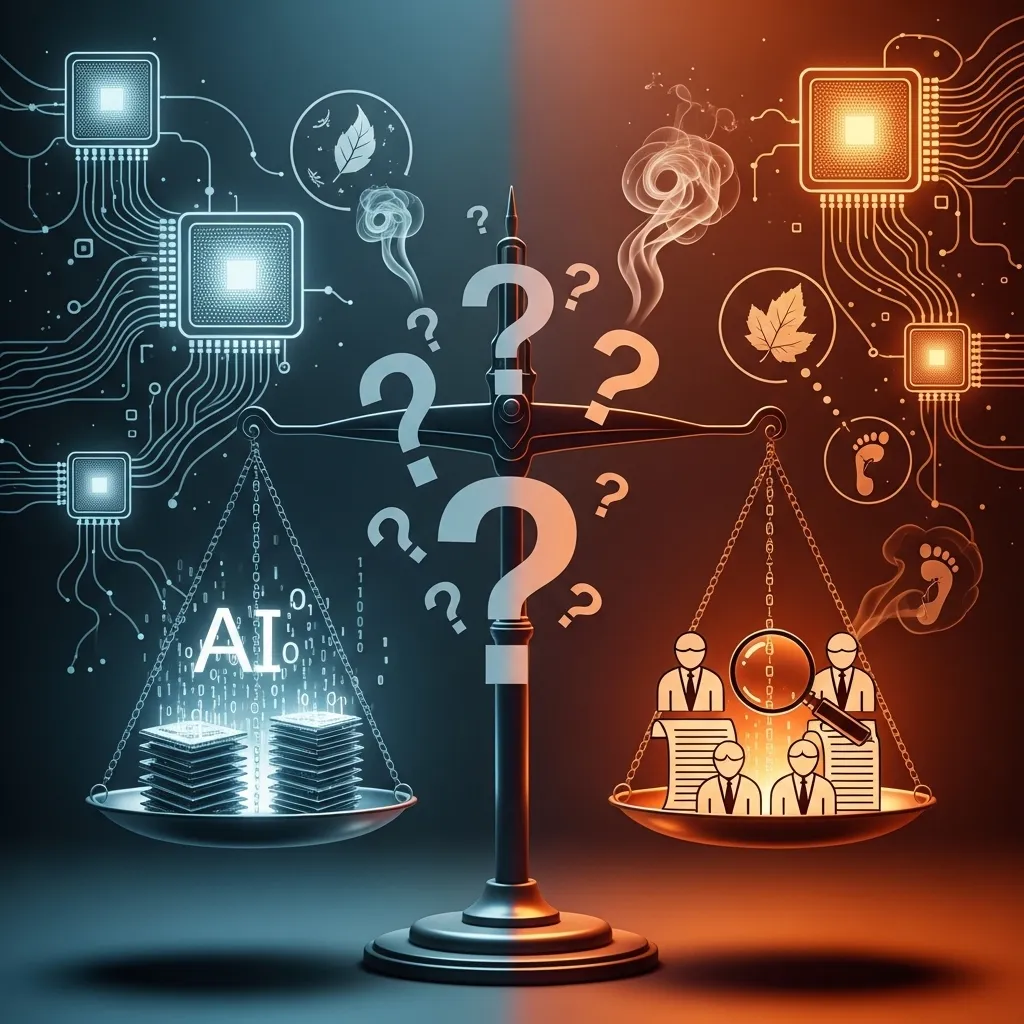

We can’t talk about AI agents responsibly without addressing some challenging ethical questions.

Transparency and disclosure matter. If an agent is making decisions that affect people—filtering job applications, assessing insurance claims, approving requests—those people deserve to know an AI is involved and have recourse if something goes wrong.

Bias amplification is a real risk. AI agents can perpetuate or even amplify biases present in their training data. If you’re using agents for consequential decisions, actively test for disparate impacts across different demographic groups.

Labor displacement is the elephant in the room. AI agents are automating work that people used to do. I don’t have easy answers here. In my observation, the pattern so far has been less about job elimination and more about job transformation—but that’s not universal, and certain roles are definitely at risk. If you’re deploying agents in an organization, think carefully about how to manage this transition humanely.

Accountability gets murky when AI agents are involved. If an agent makes a mistake with serious consequences, who’s responsible? The user who deployed it? The company that built it? The AI itself? We’re still figuring out these questions as a society, but you need to think through them for your specific use case.

Environmental impact often gets overlooked. Running sophisticated AI agents requires significant computational resources, which means energy consumption and carbon emissions. That is not a reason to avoid them, but it is a reason to be mindful of efficiency.
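On the bias point above, testing for disparate impact can start with very simple arithmetic: compare how often the agent approves requests from each group. The sketch below assumes you have a log of decisions tagged by group; the function names and the sample numbers are hypothetical. A common rule of thumb treats a ratio below 0.8 between the lowest and highest approval rates as a signal to investigate, though the right threshold depends on your context and any legal requirements that apply.

```python
def selection_rates(decisions: list) -> dict:
    """decisions is a list of (group, approved) pairs; returns approval rate per group."""
    totals, approved = {}, {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {group: approved[group] / totals[group] for group in totals}


def impact_ratio(decisions: list) -> float:
    """Lowest group approval rate divided by the highest."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Made-up sample: group A approved 8 of 10 times, group B approved 4 of 10 times.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 4 + [("B", False)] * 6
)
ratio = impact_ratio(sample)
needs_review = ratio < 0.8
```

A check like this will not tell you why a gap exists, only that one does. It is a prompt for a closer look, not a verdict.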

Looking Ahead: What’s Coming for AI Agents

Based on what I’m seeing in research labs and hearing from people building these systems, several trends are worth watching:

Multi-agent collaboration is the next frontier. Instead of one agent trying to do everything, we’re moving toward systems where multiple specialized agents work together. An agent focused on research might collaborate with one handling writing and another managing project timelines. The orchestration challenges are significant, but early experiments are promising.

Improved reasoning and planning capabilities are advancing rapidly. Today’s agents can handle multi-step tasks, but they still struggle with complex planning over extended timeframes. The next generation will be substantially better at breaking down ambitious goals, anticipating obstacles, and adapting plans as situations change.

Embodied agents—those that can interact with the physical world through robotics—remain mostly in research labs for now, but commercial applications in warehousing, delivery, and even home assistance aren’t far off.

Better personalization through more sophisticated memory and learning systems will make agents feel genuinely tailored to individual users rather than generic assistants with a thin customization layer.

Regulation and standards are inevitable. Governments worldwide are developing frameworks for AI governance, and AI agents will increasingly need to comply with specific requirements around transparency, safety, and fairness.

Final Thoughts on Starting Your AI Agent Journey

If I could offer one piece of advice for someone just getting started with AI agents, it would be this: approach them as tools, not magic.

They’re powerful tools, certainly. Tools that can save you time, handle complexity, and extend your capabilities in meaningful ways. But they’re tools that require thoughtful implementation, ongoing management, and realistic expectations.

Start small. Pick one specific use case. Experiment with a platform that matches your technical comfort level. Give yourself permission to fail and iterate. Pay attention to both the practical results and the broader implications of putting these systems to work.

The AI agent landscape is still evolving quickly. What’s true today might be outdated in six months. But the fundamental skills—understanding how to effectively direct these systems, evaluate their outputs, and integrate them thoughtfully into workflows—those will remain valuable regardless of how the technology progresses.

I’m genuinely excited about what well-implemented AI agents can do. They’re not going to solve all our problems or replace human judgment, but they’re already making certain kinds of work substantially more manageable. And honestly, we’re still just scratching the surface of what’s possible.

Frequently Asked Questions

Do I need programming skills to work with AI agents?

Not necessarily. It depends on what you’re trying to do. Many platforms now offer no-code interfaces where you can build and customize agents through visual workflows or even natural language conversations. These are perfectly suitable for common use cases like customer service bots, task automation, or personal assistants. However, if you want to build more sophisticated agents with complex integrations or custom capabilities, some programming knowledge—particularly Python and API interaction—becomes valuable. My recommendation is to start with no-code tools to understand the concepts, then explore coding if you need more advanced functionality.

How much does it cost to run an AI agent?

Pricing varies enormously depending on the platform and usage. Some services offer free tiers that are adequate for personal use or small-scale testing. As you scale up, costs typically depend on factors like the number of messages processed, computational resources used, or tokens (the small chunks of text a model reads and writes) processed. For context, a small business might spend anywhere from $50 to $500 a month on an agent handling customer inquiries, while enterprise deployments can run into the thousands. The key is to monitor usage carefully when you’re starting out, because costs can creep up faster than expected if an agent is more active than you anticipated. Most platforms provide usage dashboards to help you track spending.
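If a platform bills by tokens, a back-of-the-envelope estimate is straightforward. The sketch below is only that: the `estimate_monthly_cost` helper is hypothetical, and the volume and price figures are placeholders, not any provider’s real rates. Plug in numbers from your own platform’s pricing page.

```python
def estimate_monthly_cost(
    conversations_per_day: int,
    tokens_per_conversation: int,
    price_per_million_tokens: float,
    days: int = 30,
) -> float:
    """Rough monthly spend in dollars under simple token-based pricing."""
    total_tokens = conversations_per_day * tokens_per_conversation * days
    return total_tokens / 1_000_000 * price_per_million_tokens


# Placeholder inputs: 200 conversations a day, 3,000 tokens each, $5 per million tokens.
cost = estimate_monthly_cost(
    conversations_per_day=200,
    tokens_per_conversation=3_000,
    price_per_million_tokens=5.0,
)
```

With these inputs the agent processes 18 million tokens in a month, which comes to $90. Double the daily conversations and the bill doubles too, which is why an agent that turns out to be busier than planned can surprise you.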

Are AI agents safe to use with confidential business information?

This requires careful evaluation. Consumer AI platforms that send data to external servers pose privacy risks for confidential information—your data might be stored, logged, or even used for model training. However, many enterprise platforms now offer options specifically designed for sensitive data: on-premises deployment, dedicated instances, compliance certifications (HIPAA, SOC 2, GDPR), and guarantees that your data won’t be used for training. If you’re handling confidential information, look for platforms with these enterprise features, review their security documentation carefully, and consider consulting with your IT security team. Never paste truly sensitive data into public AI interfaces without understanding exactly how it will be handled.

How do I know if an AI agent is performing well?

Good question, and one that many people neglect. Start by defining clear success metrics before deployment. For a customer service agent, you might track resolution rate (percentage of inquiries handled without human escalation), response accuracy, customer satisfaction scores, and time saved. For a task automation agent, measure tasks completed successfully versus errors, time savings, and whether it’s actually freeing you to focus on higher-value work. Most platforms provide analytics dashboards, but also implement your own monitoring—periodically review transcripts, outputs, or actions the agent has taken. And pay attention to qualitative feedback from users. Sometimes the most valuable insights come from asking people, “Is this actually helping you, or creating more work?”
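As a rough illustration of tracking these metrics yourself, the Python sketch below computes resolution rate, accuracy, and average satisfaction from a log of interactions. The log format and the `summarize` helper are assumptions made for the example; a real platform’s export will have its own field names.

```python
def summarize(interactions: list) -> dict:
    """Compute simple success metrics from a log of agent interactions."""
    total = len(interactions)
    if total == 0:
        return {"resolution_rate": 0.0, "accuracy": 0.0, "avg_satisfaction": 0.0}
    resolved = sum(1 for item in interactions if not item["escalated"])
    correct = sum(1 for item in interactions if item["correct"])
    # Not every customer leaves a rating, so skip the missing ones.
    ratings = [item["rating"] for item in interactions if item["rating"] is not None]
    return {
        "resolution_rate": resolved / total,
        "accuracy": correct / total,
        "avg_satisfaction": sum(ratings) / len(ratings) if ratings else 0.0,
    }


# Made-up log of four customer service interactions.
log = [
    {"escalated": False, "correct": True, "rating": 5},
    {"escalated": False, "correct": True, "rating": 4},
    {"escalated": True, "correct": True, "rating": None},
    {"escalated": False, "correct": False, "rating": 2},
]
metrics = summarize(log)
```

Here three of four inquiries were resolved without escalation and three of four answers were correct, so both rates are 0.75. Tracking the two separately matters: the fourth interaction was "resolved" without a human but gave a wrong answer, which a resolution rate alone would hide.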

Can AI agents replace human employees?

The honest answer is: sometimes yes, but usually not entirely. AI agents excel at handling repetitive, rule-based tasks and processing large volumes of standardized requests. They’re available 24/7 and don’t get tired. In these specific domains, they can indeed replace work that humans previously did. However, they struggle with situations requiring genuine creativity, emotional intelligence, complex ethical judgment, or navigating ambiguous circumstances without clear patterns. What I’m seeing more commonly is role transformation rather than elimination—humans move from doing repetitive tasks to handling exceptions, complex cases, and more strategic work. The agents handle volume; humans handle nuance. That said, this transition does create real challenges for workforce planning and individual careers that organizations need to manage thoughtfully and ethically.