Automating Blog Writing Using AI: What I’ve Learned After Writing 500+ Posts This Way

Three years ago, I would’ve told you that AI-generated blog content was garbage—the kind of stuff that reads like a high schooler padded their essay to meet word count. Repetitive, generic, and about as engaging as reading a refrigerator manual. I was managing content for four different clients, personally writing everything, and slowly losing my mind trying to maintain quality while hitting publishing deadlines.

Then I started experimenting with AI blog automation. Not because I wanted to, honestly, but because I was desperate and curious in equal measure.

Now? I’d estimate that 70% of the blog content I publish has significant AI involvement. And before you write me off as a hack churning out soulless content, hear me out—because the reality of AI blog writing in 2026 is far more nuanced than either the hype or the criticism suggests.

What “Automating Blog Writing” Actually Means in Practice

Let me clear up a common misconception right away: Very few people are just pressing a button and publishing whatever an AI spits out. That’s a myth perpetuated by people who haven’t actually tried this or who did it poorly once and gave up.

When I talk about automating blog writing with AI, I’m describing a spectrum of involvement:

Light automation: AI handles research, outlines, and first drafts. Humans do heavy rewriting, adding expertise, personality, and examples. This is where I started.

Medium automation: AI generates most content with human-provided strategic direction, examples, and editing. This is where most professional content creators operate now.

Heavy automation: AI produces near-publish-ready content with minimal human input beyond topic selection and quick fact-checking. This works for certain content types but is riskier.

Full automation: AI does everything from topic ideation to publishing. This exists, and it’s exactly as problematic as you’d imagine for most use cases.

I operate mostly in the medium range, occasionally dipping into heavy automation for specific content types, and I’ll explain why that balance works.

The Tools I’ve Actually Used and What They’re Good For

I’ve tested probably fifteen different AI blog writing tools since 2023. Some were impressive. Some made me laugh at how bad they were. Here’s what’s actually worth your time in 2026:

ChatGPT Pro (the advanced tier released in late 2025) remains my daily workhorse. The jump in coherence and factual reliability from earlier versions was substantial. I use it primarily for research aggregation, outline generation, and first drafts on topics where I already have expertise.

For a client in the sustainable architecture space, I fed ChatGPT a dozen research papers, three interviews I’d conducted, and my rough notes. It produced a 2,500-word draft that captured about 75% of what I wanted to say. I spent maybe three hours reshaping it, adding personal anecdotes from the interviews, and punching up the introduction. The final piece got picked up by two industry publications. Total time: about 5 hours. Writing that from scratch would’ve taken me 12-14 hours.

Claude (Anthropic’s latest version) has become my go-to for anything requiring nuance or ethical sensitivity. I was writing a piece about healthcare AI for a medical device company, and Claude better understood the need for careful language around patient outcomes and regulatory considerations. It’s also noticeably better at maintaining consistent tone across long pieces—I’ve generated 4,000+ word articles where the conclusion doesn’t sound like it was written by a different person than the introduction.

Jasper evolved significantly from its early days. Their “Brand Voice” feature, which they refined throughout 2024-2025, learns your writing style from samples you provide. I fed it ten of my best-performing articles, and it now generates content that sounds reasonably like me—not perfectly, but close enough that editing feels like refining my own rough draft rather than translating someone else’s work. For a travel blog client, this cut production time by about 60% while maintaining the casual, story-driven style their audience expects.

Writesonic excels at SEO-focused content. If I need an article optimized around specific keywords without it reading like keyword-stuffed nonsense, this is where I go. Their semantic SEO analysis has gotten genuinely sophisticated: it identifies related topics, questions to address, and content gaps better than I can manually. A recent piece about “commercial solar installation” that Writesonic helped structure ranks #3 for the primary keyword and in the top 5 for about a dozen related terms. The secret? It suggested covering financing options, maintenance requirements, and ROI calculations, angles I hadn’t planned to include but that searchers clearly wanted.

Koala Writer (which emerged as a serious contender in 2025) specializes in long-form, research-heavy content. It can process multiple sources, cite them properly, and maintain factual accuracy better than most tools. I’ve used it for several white papers and in-depth guides where credibility matters immensely. It’s not cheap—about $250/month for the tier I use—but for certain clients, it’s worth every penny.

Frase remains my favorite for content optimization rather than pure generation. It analyzes top-ranking content for your target keywords and shows you what topics, questions, and terms to cover. I often use Frase for the strategic framework, then feed that framework to ChatGPT or Claude for the actual writing.

My Current Workflow: What Works After Hundreds of Articles

I’ve refined this process through lots of trial and error. Your mileage may vary, but here’s the workflow that consistently produces quality content:

Step 1: Strategy and Topic Selection (100% Human)

I never let AI pick my topics. AI can suggest ideas, but the decision about what serves my audience and business goals? That’s human judgment. I spend time understanding what my audience actually needs, what gaps exist in current content, and what angle will differentiate our piece.

For a recent project with a B2B SaaS company, AI suggested writing about “productivity tips for remote teams.” Fine topic, but saturated. I chose instead to focus on “productivity measurement without surveillance in remote teams”—a more specific angle addressing a real tension I’d noticed in industry conversations. AI wouldn’t have made that nuanced choice.

Step 2: Research and Outlining (70% AI, 30% Human)

This is where AI shines. I use it to:

- Aggregate information from multiple sources

- Identify common themes and divergent viewpoints

- Suggest logical structure and flow

- Flag knowledge gaps I need to address

But I always add my own research—original interviews, personal experience, case studies, data from the client’s own analytics. AI can summarize what already exists on the internet, but it can’t conduct an interview with your customer or analyze your specific performance data.

For that remote team productivity piece, ChatGPT gave me a solid outline covering the obvious bases. I added a section on “trust-based alternatives to monitoring” based on conversations with three HR leaders I’d interviewed. That section became the most-shared part of the article.

Step 3: First Draft (80% AI)

I feed the AI my outline, research notes, specific points I want covered, and examples I want included. The prompt matters enormously—I’ve developed a template that specifies:

- Desired tone and style

- Target word count and section lengths

- Specific examples or data to incorporate

- Phrases or approaches to avoid

- Target audience and their knowledge level
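A template like this can be captured in a small helper so every brief covers the same elements. The following is only a sketch: the `PromptBrief` class, its field names, the prompt wording, and the example values are all hypothetical, not the exact template described above.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBrief:
    """Hypothetical container for the template elements listed above."""
    topic: str
    tone: str
    audience: str
    word_count: int
    must_include: list[str] = field(default_factory=list)
    avoid: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Build a plain-text prompt to paste into whichever AI tool you use."""
        lines = [
            f"Write a blog post draft about: {self.topic}",
            f"Tone and style: {self.tone}",
            f"Target audience: {self.audience}",
            f"Target length: about {self.word_count} words",
        ]
        if self.must_include:
            lines.append("Incorporate these examples or data points:")
            lines += [f"- {item}" for item in self.must_include]
        if self.avoid:
            lines.append("Avoid these phrases or approaches:")
            lines += [f"- {item}" for item in self.avoid]
        return "\n".join(lines)


# Example values are illustrative only.
brief = PromptBrief(
    topic="productivity measurement without surveillance in remote teams",
    tone="conversational, first person",
    audience="HR leaders familiar with remote work basics",
    word_count=1500,
    must_include=["trust-based alternatives to monitoring"],
    avoid=["it's important to note", "in today's world"],
)
print(brief.render())
```

Keeping the brief as structured data rather than free text makes it easy to reuse the same tone and avoid-list across posts while changing only the topic and examples.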

The AI generates a draft. Sometimes it’s excellent. Sometimes it’s mediocre. Rarely is it terrible anymore (that was common in 2023 but much less so now).

Step 4: Heavy Human Editing (100% Human)

This is the make-or-break phase. I read the entire draft and:

- Add personality and voice quirks AI can’t replicate

- Insert specific examples and stories

- Fact-check everything (AI still hallucinates occasionally)

- Restructure sections that don’t flow well

- Add opening hooks and closing calls-to-action that actually work

- Remove repetitive phrasing AI loves (“it’s important to note,” “in today’s world,” etc.)

I’d estimate this takes 30-50% of the time writing from scratch would take, but it’s absolutely non-negotiable. Publishing raw AI output is a recipe for mediocrity at best and factual disasters at worst.
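Part of this pass can be mechanized. As a rough sketch, a few lines of Python can flag stock phrases before the human read-through. The phrase list here is an assumption (only the first two entries come from the list above), and `find_filler` is a hypothetical helper name. It does not replace reading the draft.

```python
import re

# Example phrases only: the first two come from the list above, the rest are
# assumptions. Extend with whatever your own drafts overuse.
FILLER_PHRASES = [
    "it's important to note",
    "in today's world",
    "in conclusion",
    "when it comes to",
]


def find_filler(draft: str, phrases=FILLER_PHRASES) -> dict[str, int]:
    """Count case-insensitive occurrences of each filler phrase in the draft."""
    # Normalize curly apostrophes so both apostrophe styles match one phrase.
    normalized = draft.replace("\u2019", "'").lower()
    counts = {}
    for phrase in phrases:
        hits = len(re.findall(re.escape(phrase.lower()), normalized))
        if hits:
            counts[phrase] = hits
    return counts


sample = (
    "In today\u2019s world, content matters. It's important to note that "
    "quality wins. It\u2019s important to note that voice matters too."
)
print(find_filler(sample))
```

A count per phrase is enough to show where a draft leans on filler; deciding what to write instead is still an editing job.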

Step 5: SEO Optimization and Polish (50% AI, 50% Human)

I run the edited draft through Frase or Surfer SEO to check if I’ve covered important related topics and terms. AI tools are excellent at this technical optimization. But I make the final calls about what to add or cut—sometimes the “best practice” for SEO makes content worse for actual humans.
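For a quick sanity check outside those tools, a plain term-coverage pass is easy to sketch. This does not use or imitate the Frase or Surfer SEO APIs. It is a simple substring check against a term list you supply by hand, and `missing_terms` and the sample text are hypothetical.

```python
def missing_terms(draft: str, required_terms: list[str]) -> list[str]:
    """Return the terms that never appear in the draft (case-insensitive)."""
    text = draft.lower()
    return [term for term in required_terms if term.lower() not in text]


# Illustrative draft and term list.
draft = (
    "Commercial solar installation costs vary by roof size. "
    "Most owners compare financing options before signing."
)
terms = ["financing options", "maintenance requirements", "ROI calculations"]
print(missing_terms(draft, terms))
```

The output is a list of candidates to consider, not a to-do list: a missing term is only worth adding if covering it actually helps the reader.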

Step 6: Final Review and Publishing (100% Human)

I read the entire piece one more time as if I’m the target reader. Does it actually help someone? Is it interesting? Would I be proud to put my name on it? If yes, it publishes. If no, back to editing.

What Actually Improves vs. What Gets Worse

After managing dozens of blogs and publishing hundreds of AI-assisted articles, I can tell you with certainty what works and what doesn’t.

What Gets Better:

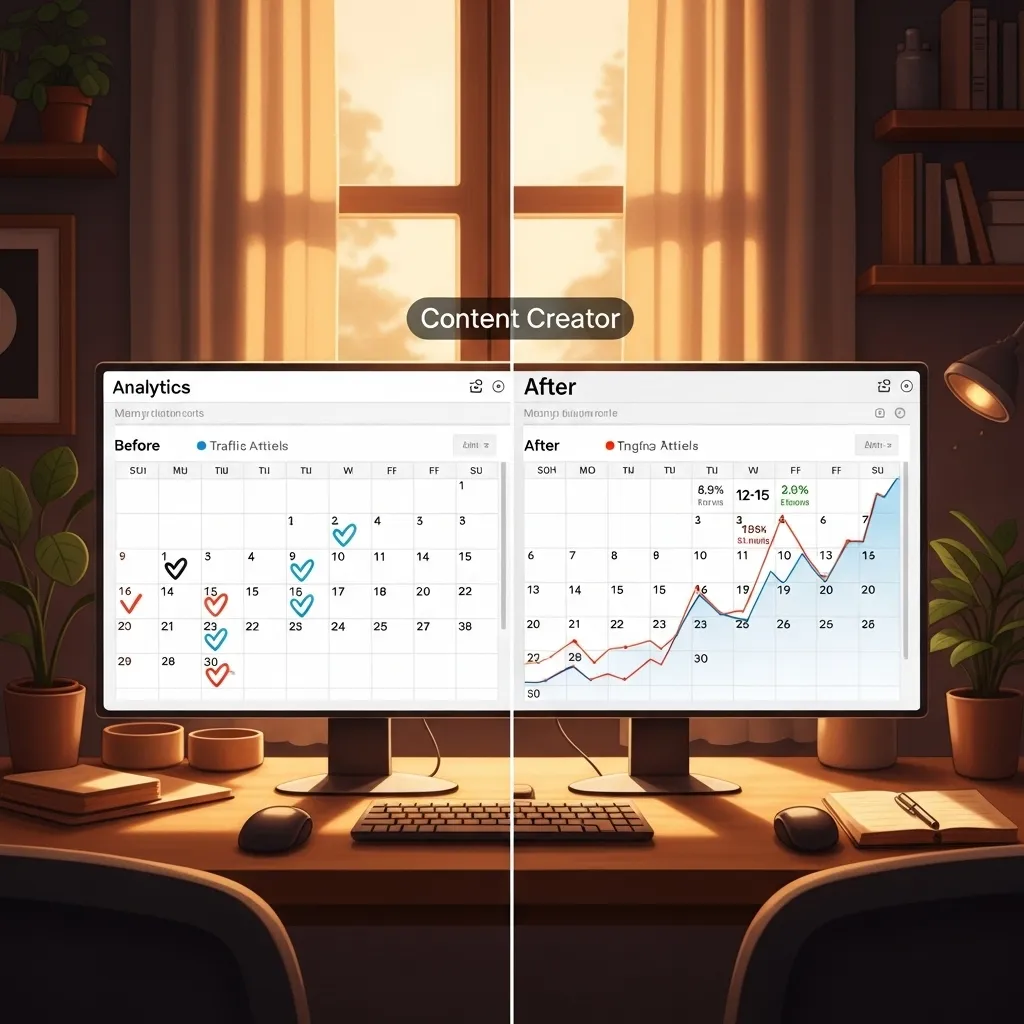

Publishing velocity: We went from 4-6 articles monthly to 12-15 without additional headcount. That’s meaningful for businesses competing for organic search visibility.

Consistency: No more “I’m just not feeling creative today” writer’s block. AI provides a starting point even when your brain feels like mush.

Research depth: AI can process and synthesize information from dozens of sources in minutes. My articles now typically incorporate more data points and perspectives than when I did everything manually.

SEO technical execution: AI tools are better than most humans at comprehensive keyword coverage, semantic relationships, and structural optimization.

Lower-funnel content: Product comparisons, feature explanations, and how-to guides are straightforward, information-dense content, and this type of content has actually improved with AI assistance. A recent comparison article between project management tools (AI-generated with my editing) outperforms the manually written version we published two years ago.

What Gets Worse (If You’re Not Careful):

Authenticity and voice: AI can mimic style but struggles with genuine personality. The little verbal quirks, the unexpected metaphors, the subtle humor—these emerge less naturally from AI.

Thought leadership: AI is excellent at synthesizing existing information but terrible at original thinking. If you’re trying to present new frameworks, challenge conventional wisdom, or share genuinely novel insights, the value of AI assistance drops significantly.

Emotional connection: Stories that move people, vulnerable admissions, triumphant moments—AI can write about these things but rarely captures the emotional resonance that makes readers feel something.

Cultural and contextual awareness: I’ve caught AI suggesting examples that were tone-deaf, references that didn’t land, or framings that would alienate portions of my audience. Human cultural awareness still matters immensely.

Specific expertise demonstration: Google’s algorithms increasingly favor content that demonstrates real expertise and experience. Generic AI content often lacks the specific details, nuanced takes, and experiential knowledge that signal genuine expertise.

The SEO Question Everyone Asks

“Will Google penalize AI content?”

Based on everything I’ve seen—including consultations with SEO experts and analysis of our own AI-assisted content performance—the answer is: not really, but also yes, kind of.

Google doesn’t penalize content because it’s AI-generated. They penalize low-quality, unhelpful content regardless of how it’s created. The problem is that a lot of AI content happens to be low-quality and unhelpful.

I manage a blog where approximately 80% of posts have significant AI involvement. Organic traffic has increased 340% over the past 18 months. Several AI-assisted articles rank in the top 3 for competitive keywords. Google hasn’t penalized us because the content is genuinely useful and demonstrates expertise.

However, I’ve also seen sites tank after flooding their blog with thin AI content. A competitor in the marketing automation space published 50+ AI-generated articles in two months—obvious keyword-stuffed, generic stuff with no real insight. Their organic visibility dropped nearly 60% in the following months.

The difference? We use AI as a tool to produce genuinely helpful content faster. They used it to game the system.

Google’s March 2024 core update, which folded the earlier helpful content system into its core ranking systems, and subsequent algorithm refinements have gotten better at distinguishing between these approaches. Google’s quality guidelines describe what they’re looking for as E-E-A-T (experience, expertise, authoritativeness, and trustworthiness), which in practice means specific examples, nuanced understanding, original data, and unique perspectives. AI can help you create that if you guide it properly. It can’t create it autonomously.

My approach to AI content that ranks:

- Always add original research, interviews, case studies, or data

- Include specific examples from real experience (not made-up scenarios)

- Demonstrate nuanced understanding of tradeoffs and complexities

- Answer questions thoroughly, not superficially

- Update content regularly (AI drafts make updates faster, so do them)

- Interlink strategically to build topic authority

- Use AI for efficiency but human expertise for credibility

The Content Types Where AI Excels (And Where It Fails)

Not all blog content is created equal, and AI performance varies dramatically by content type.

Where AI Is Genuinely Excellent:

How-to guides and tutorials: Step-by-step instructional content is perfect for AI. I recently used Claude to write a comprehensive guide on “How to Set Up Google Analytics 4 for E-commerce.” The AI handled the technical steps logically and clearly. I added screenshots and specific troubleshooting tips from my experience. The final piece is better than what I would’ve written manually because AI structured the information more systematically than I would have.

Product comparisons and reviews: When you feed AI specs, features, and pros/cons, it organizes comparison content effectively. I’ve created several “Tool A vs. Tool B” articles where AI handled the feature matrix and basic comparison while I added hands-on testing insights and recommendations.

FAQ content: AI is fantastic at taking a list of questions and generating clear, concise answers. I created a 3,000-word FAQ guide for a client’s complex product. AI structured everything; I fact-checked and added specific examples.

News summaries and updates: If you’re covering industry news or policy changes, AI can summarize developments and explain implications competently. I use this for regular “what’s new in [industry]” roundups.

Foundational educational content: “What is [concept]” articles work well with AI. The explanation is usually clear and accurate. I enhance them with visuals, examples, and simplified analogies AI might not generate.

Where AI Struggles or Fails:

Opinion and commentary: AI can fake an opinion, but it’s obvious and hollow. Authentic takes on industry trends, controversial stances, predictions based on experience—these need human conviction. I wrote a piece arguing that most content marketing is waste (ironic, given the topic here), and there’s no way AI could’ve produced that authentic frustration and specific criticisms.

Personal stories and case studies: AI can write “a story about someone who succeeded with X,” but it lacks the specific details that make stories real and compelling. When I write about a specific client transformation, I include the weird quirky details—the CEO who hated social media, the intern’s idea that changed everything, the moment we almost gave up. AI doesn’t capture that richness.

Deeply technical or specialized content: For subjects requiring genuine expertise, AI produces superficial content unless you heavily guide it with your own knowledge. For a cybersecurity client’s blog, AI gave me a starting framework, but I essentially rewrote every technical section because the accuracy and specificity needed to be perfect.

Humor and satire: AI has the comedic timing of a DMV employee. It can attempt jokes, but they usually land with a thud. Any content where humor is central needs to be human-driven.

Vulnerable or emotionally complex topics: I write occasionally about mental health in entrepreneurship, burnout, and failure. AI can write words about these topics, but they feel plastic and empty. Real vulnerability requires human courage.

The Ethical Minefield (And How I Navigate It)

I’ve had multiple conversations with peers about whether AI blog writing is “cheating” or deceptive. The ethics are genuinely complex.

Disclosure: Should you tell readers that AI helped write the content? I’ve landed on selective disclosure. For most blog content, I don’t explicitly state “AI helped write this” any more than I’d previously stated “spell-check corrected this” or “an editor improved this.” AI is a tool in the production process.

However, for content where authority and personal expertise are the entire point—opinion pieces, expert commentary, personal essays—I either write it entirely myself or make the AI involvement clear if it helped with research or structure.

Authorship and accountability: I put my name (or my client’s name) on AI-assisted content only when I’ve reviewed it thoroughly enough to stand behind every claim. If an error makes it through, that’s on me, not the AI. I fact-check religiously and never publish content I haven’t read completely.

Displacement and labor: AI blog writing eliminates some freelance opportunities. I used to hire junior writers for routine blog posts. Now I don’t need to for most content. That’s economically efficient and also kind of shitty for people trying to break into content writing. I don’t have a great answer for this beyond being honest about the reality.

Quality and pollution: The internet is getting flooded with mediocre AI content. I’m contributing to that problem if I’m not careful. My personal standard: I only publish content I believe genuinely helps someone. If it’s just existing to occupy digital space or chase keywords, I don’t publish it, regardless of how easy AI makes production.

Originality and plagiarism: AI occasionally produces content suspiciously similar to existing published pieces. I run everything through plagiarism checkers (Copyscape and Grammarly) and Google portions of text to check for duplication. I’ve caught and rewritten sections that were too close to source material.
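When a specific source is already suspected, a local comparison can help decide how much rewriting a section needs. The sketch below is not how Copyscape or Grammarly work. It swaps in a simple word-shingle overlap measure, and the function names, shingle size, and sample sentences are all assumptions for illustration.

```python
def shingles(text: str, size: int = 5) -> set[tuple[str, ...]]:
    """Split text into overlapping word sequences ("shingles") of a given size."""
    # Whitespace split only: punctuation stays attached to its word.
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def overlap_ratio(draft: str, source: str, size: int = 5) -> float:
    """Share of the draft's shingles that also appear in the source (0.0 to 1.0)."""
    draft_shingles = shingles(draft, size)
    if not draft_shingles:
        return 0.0
    return len(draft_shingles & shingles(source, size)) / len(draft_shingles)


# Neutral sample sentences, not real published text.
source = "the quick brown fox jumps over the lazy dog near the river"
draft = "The quick brown fox jumps over a sleeping cat near the barn"
ratio = overlap_ratio(draft, source, size=4)
print(f"{ratio:.2f}")
```

There is no universal cutoff for "too close". A high ratio on a long passage is a signal to rewrite, while a low one does not prove the text is original, since paraphrase slips past word-level matching.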

The Productivity Math: Real Numbers

Let me give you concrete numbers from my own work because the productivity claims around AI are often exaggerated.

Before AI (2022):

- Average time per 1,500-word blog post: 6-8 hours

- Monthly output (solo): 12-15 posts

- Client capacity: 4 clients max

- Burnout level: high

With AI (2026):

- Average time per 1,500-word blog post: 2-4 hours

- Monthly output (solo): 25-30 posts

- Client capacity: 7 clients comfortably

- Burnout level: moderate (different stresses, like quality anxiety)

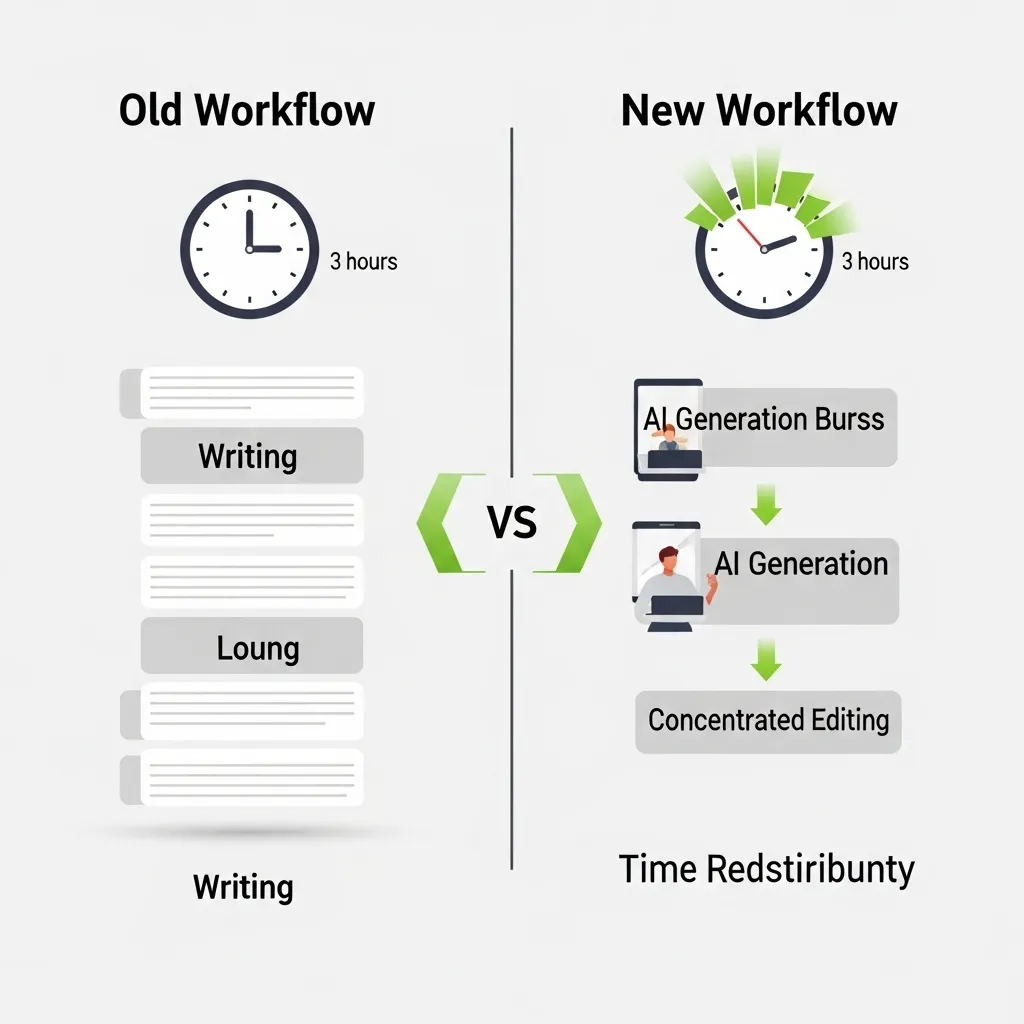

That’s not 10x productivity like some AI evangelists claim, but it’s roughly 2-3x for me. The time savings are real but not magical.

Where the time goes now:

- 30 minutes: Topic selection, research, outlining

- 20 minutes: AI prompt engineering and generation

- 90 minutes: Heavy editing, adding examples, personality injection

- 20 minutes: SEO optimization and final polish

- 10 minutes: Formatting, image selection, publishing

Compare that to my previous workflow:

- 45 minutes: Research and outlining

- 180 minutes: Writing from scratch

- 60 minutes: Editing and revision

- 15 minutes: SEO check

- 10 minutes: Publishing

Drafting and editing together dropped from 240 minutes (180 writing, 60 editing) to 110 minutes (20 generating, 90 editing). That’s where the productivity gain lives.
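Adding up the two breakdowns makes the comparison concrete. The minute values below are copied from the lists above, and the dictionary labels are shortened for readability. Note that the itemized “before” total comes to 310 minutes (about 5.2 hours), a bit under the 6-8 hour average quoted earlier, so treat the breakdown as illustrative rather than exact.

```python
# Minutes per post, copied from the two breakdowns above.
with_ai = {
    "topic selection, research, outlining": 30,
    "prompting and generation": 20,
    "editing": 90,
    "SEO and polish": 20,
    "formatting and publishing": 10,
}
before_ai = {
    "research and outlining": 45,
    "writing from scratch": 180,
    "editing and revision": 60,
    "SEO check": 15,
    "publishing": 10,
}

total_now = sum(with_ai.values())        # 170 minutes
total_before = sum(before_ai.values())   # 310 minutes
speedup = total_before / total_now

print(f"Now: {total_now} min, before: {total_before} min")
print(f"Speedup: {speedup:.1f}x")
```

On these itemized numbers the per-post speedup is about 1.8x, at the low end of the rough 2-3x estimate and a long way from 10x.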

The Quality Question: Am I Producing Better Content?

Honest answer: It depends on the content type and how much I care.

For routine, informational content where I’m not trying to be brilliant—just helpful and clear—AI assistance has probably improved quality. The content is more comprehensive, better structured, and more thoroughly researched than what I’d produce under time pressure.

For content where I’m trying to stand out, demonstrate unique expertise, or emotionally connect with readers, I don’t think AI has improved quality. In fact, over-relying on it would make the content worse. But it still saves time on the structural groundwork.

The real risk is regression to the mean. AI pushes everything toward “pretty good.” That’s great if your baseline was “not great,” but problematic if you’re trying to produce exceptional work.

I’ve noticed that my most successful content—the pieces that get shared widely, cited by other creators, or lead to business opportunities—is still the stuff I write more manually, with minimal AI involvement. AI helps me be more productive with the 80% of content that’s solid but not spectacular. The 20% that really matters still requires full human creative effort.

Common Mistakes I’ve Made (So You Don’t Have To)

Over-trusting factual accuracy: Early on, I published an AI-written piece about marketing regulations that confidently cited a law that didn’t exist. A reader called it out, and I looked like an idiot. Now I fact-check everything, especially statistics, quotes, and regulatory/legal information. AI hallucinates less than it used to, but it still happens.

Losing my voice: I went through a phase where I was editing AI content so lightly that my writing started sounding generic. Multiple people mentioned my blog felt “different” (not in a good way). I had to consciously rebuild voice and personality into the editing process.

Publishing too much mediocre content: When AI made production easy, I fell into a “more is better” trap. I was publishing 20 posts monthly, but many were forgettable. When I scaled back to 12-15 but spent more time making each piece genuinely good, engagement and results improved.

Ignoring the prompting learning curve: My first AI-generated blog posts were terrible because my prompts were terrible. “Write a blog post about email marketing” produces garbage. Learning to craft detailed, strategic prompts—specifying audience, angle, tone, structure, examples—took months but dramatically improved output quality.

Not adapting to each AI’s strengths: I initially used ChatGPT for everything. When I started matching tasks to the right tool (Claude for nuanced topics, Jasper for voice consistency, Writesonic for SEO-focused pieces), quality improved noticeably.

Skipping the human review: I got lazy a few times and published with only minimal skim-reading. Each time, I later found weird phrasings, repetitive sections, or awkward transitions I would’ve caught with proper review. There are no shortcuts on the final human QA step.

Looking Ahead: Where This Is Going

Based on the trajectory I’m seeing, here’s where AI blog writing is headed:

Personalization at scale: Emerging tools are starting to generate multiple versions of the same article optimized for different audience segments. Same core information, different framings, examples, and tone depending on whether the reader is a beginner or expert, B2B or B2C, technical or business-focused.

Voice cloning getting really good: Tools that learn your writing style are improving rapidly. Within a year or two, I expect AI will be able to generate first drafts that sound convincingly like me with minimal editing needed. That’s simultaneously exciting and unsettling.

Better integration of original research: AI tools are starting to integrate with data analytics, CRM systems, and proprietary databases. Imagine AI drafting a blog post that automatically pulls in relevant stats from your Google Analytics, quotes from your customer reviews, and performance data from your product—all without you manually gathering that information.

Real-time optimization: Some tools are testing continuous optimization where AI monitors how readers interact with content and suggests real-time revisions to improve engagement or conversions. Your blog post would evolve based on how it’s performing.

Multimedia content generation: AI blog writing is expanding beyond text to suggest images, generate videos, create infographics, and produce audio versions—all from a single content brief.

The trendline is clear: AI will handle more of the production process with less human involvement needed. The question is whether that produces better content or just more content.

My Honest Take After Three Years

AI has fundamentally changed how I write blogs, and I can’t imagine going back. The productivity gains are real, and for certain content types, the quality is as good or better than what I’d produce manually.

But—and this is important—AI is a tool, not a replacement for thinking, expertise, or creativity. The blogs that succeed with AI are those where humans drive strategy, inject genuine expertise and personality, and maintain rigorous quality standards. The blogs that fail are those treating AI as a content vending machine where you insert keywords and receive publishable posts.

If you’re considering automating blog writing with AI, my advice:

Start small with low-stakes content. Learn prompting skills. Develop a workflow that preserves your quality standards. Never publish without thorough human review. Focus on using AI to amplify your expertise, not replace it.

The bloggers I worry about are those who’ve never developed strong writing skills and think AI means they don’t need to. AI makes good writers much more productive. It doesn’t make non-writers into writers.

Three years in, I’m producing more content with less stress, but I’m also more aware of the need to preserve what makes content genuinely valuable: real expertise, authentic voice, original thinking, and genuine helpfulness. AI can assist with all of that, but it can’t create it from nothing.

The future of blog writing isn’t AI or humans. It’s AI and humans working together, each doing what they do best. I’m still figuring out exactly what that balance looks like, but that’s the journey every content creator is on right now.

Frequently Asked Questions

1. Can Google detect AI-written content, and will it hurt my SEO?

Google can likely detect AI-generated content through various signals, but their official position is that they don’t penalize content simply because AI created it—they penalize low-quality, unhelpful content regardless of origin. In practice, I’ve seen plenty of AI-assisted content rank well when it genuinely serves search intent and demonstrates expertise. The key is ensuring AI-generated content meets quality standards: comprehensive, accurate, well-structured, and genuinely helpful. Don’t try to game the system with mass-produced thin content. Use AI to help create genuinely valuable content faster, add original insights and examples, and maintain high editorial standards. Sites that do this successfully are seeing normal or improved SEO performance.

2. How much should I expect to pay for AI blog writing tools?

Pricing varies wildly depending on what you need. Basic tools like ChatGPT start at $20/month and can handle most blog writing tasks if you’re willing to do more manual prompting and editing. Mid-tier specialized tools (Jasper, Writesonic, Copy.ai) typically run $50-100/month for individual plans with reasonable usage limits. Professional-grade tools with advanced features, brand voice training, and SEO optimization (like the upper tiers of Jasper or Koala Writer) can cost $200-500/month. For most bloggers starting out, I’d recommend beginning with ChatGPT Plus or a $50-75/month specialized tool to test whether AI blog writing fits your workflow before investing heavily.

3. Should I disclose to readers that AI helped write the content?

This is genuinely debatable, and different content creators have different approaches. My personal practice: I don’t routinely disclose AI assistance for standard informational blog posts, treating it like any other production tool (editors, research assistants, etc.). However, I do disclose or avoid heavy AI use for content where personal authority is central—opinion pieces, expert commentary, personal experiences. Some publications now require disclosure, and regulations may eventually mandate it in certain contexts. The ethical standard I follow: If knowing AI helped create the content would make readers question its value or credibility, either disclose it or ensure the content demonstrates enough human expertise that it wouldn’t matter. Transparency builds trust, so when in doubt, err toward disclosure.

4. What’s the biggest mistake people make when using AI to write blogs?

The single biggest mistake is publishing raw AI output with minimal review. I’ve seen this repeatedly—someone generates content with ChatGPT or another tool, does a quick skim for obvious errors, and publishes. The result is generic, sometimes inaccurate, and often repetitive content that damages credibility rather than building it. AI should generate first drafts or assist with research and structure, but human expertise, fact-checking, personality injection, and strategic editing are absolutely essential. Related mistakes include: not fact-checking AI claims, using AI for topics where you lack expertise to guide it properly, optimizing for quantity over quality, and not developing proper prompting skills. Treat AI as a junior assistant who needs supervision, not as a replacement for your own thinking and expertise.

5. How long does it take to get good at AI blog writing and see real productivity gains?

In my experience, expect about 2-3 months of consistent practice before you’ve developed efficient workflows and prompting skills. The first few weeks are typically frustrating—the output quality is inconsistent, you’re not sure how to prompt effectively, and editing AI drafts might take as long as writing from scratch. Gradually, you learn what works: how to brief the AI, which tools excel at which content types, how much human involvement different post types need, and how to edit efficiently. Most people I’ve talked to see meaningful productivity gains (30-50% time savings) within the first month and more substantial gains (50-80% for certain content types) by month three. The key is treating it as a skill to develop, not a magic solution. Invest time experimenting with different tools, refining your prompts, and finding the workflow that fits your style.